What Are AI User Agents and Should You Allow or Block Them?

Key Highlights

- AI user agents are distinct from traditional web crawlers, collecting data for both real-time search results and training large language models.

- Understanding these agents is crucial as they directly impact your website's visibility within generative AI platforms like ChatGPT and Google Gemini.

- You can manage access for these artificial intelligence bots using your site's robots.txt file, allowing or blocking them as needed.

- Key user agents include OpenAI's GPTBot, Google-Extended, and Anthropic's ClaudeBot, each with a specific purpose.

- Identifying AI user agents involves checking server logs for specific user-agent strings and verifying their IP ranges.

- Properly managing AI bots ensures your content is cited in AI answers, driving qualified traffic to your site.

The digital landscape is evolving with the rise of AI user agents, specialized bots that interact with your website differently than traditional search engines. These agents are the backbone of new foundation models, enabling them to crawl web pages and process natural language to provide up-to-date, relevant answers. As a website owner, understanding how these agents work is no longer optional. It's essential for ensuring your content is discoverable and accurately represented in the next generation of search and information discovery.

What Are AI User Agents And Why Do They Matter?

AI user agents are sophisticated software systems that leverage artificial intelligence to perform complex tasks on behalf of users. Unlike a simple search crawler that just indexes content, these agents can exhibit reasoning, planning, and memory. They are designed to pursue goals and can make decisions, learn over time, and adapt their actions. This advanced capability is made possible by machine learning and large language models, allowing them to handle multi-step workflows far beyond the scope of traditional bots.

Their importance cannot be overstated. These AI user agents are what power the real-time information retrieval for platforms like ChatGPT and Perplexity. When a user asks a question, an agent is dispatched to search the web and source current information for the answer. This means your website's interaction with these agents directly influences whether your content gets cited and surfaced in AI-driven search results. Managing them effectively is key to getting your brand in front of a growing, highly engaged audience using AI for discovery.

How Do AI User Agents Differ from Traditional Web Crawlers?

AI user agents operate with a level of intelligence and purpose that sets them apart from a traditional web crawler. A standard crawler, like Googlebot, systematically indexes the web to build a search database. In contrast, an AI agent often acts in real time to answer a specific user query.

Agentic AI User Interface vs. Traditional Search Bot Behavior

The primary difference lies in their purpose. A traditional search bot from search engines like Google methodically crawls and indexes web pages to build a massive, searchable database. Its behavior is automated, ongoing, and not tied to a specific, immediate user request. The bot's "goal" is to map the internet for future searches.

Agentic AI, on the other hand, often acts as a direct extension of a user's intent within a user interface like ChatGPT. When a user poses a question that requires current information, the agentic system dispatches a bot through a browser environment to fetch specific data in real time. This behavior is reactive and goal-oriented, designed to fulfill an immediate information need.

This means the interaction is more like a user-initiated action than an automated crawl. The agent is tasked with finding a specific answer, not just indexing a page. This fundamental shift from passive indexing to active, on-demand retrieval changes how websites are accessed and utilized by AI systems.

Unique AI Agent User Experiences and Data Collection Patterns of AI Bot User Agents

AI bot user agents introduce new data collection patterns that directly influence user experiences on generative AI platforms. Unlike the singular goal of indexing for search, these agents have multiple purposes, which impacts how they interact with your web pages.

Their data collection activities can be broadly categorized:

- Real-time Retrieval: Bots like ChatGPT-User fetch content on-demand to answer a user's specific question, providing fresh information in AI responses.

- Model Training: Agents such as GPTBot crawl the web to gather vast amounts of training data, which is used to build and refine the underlying large language models.

- Search Indexing: Some AI platforms, like Perplexity, use their own crawlers (e.g., PerplexityBot) to build a dedicated search index to power their answer engines.

This multi-faceted approach means your content can be used in different ways, impacting both immediate search results within AI chats and the long-term knowledge base of the models themselves. When you update a page, that new information can be reflected in AI answers almost instantly during real-time retrieval, a significant departure from the slower indexing process of traditional search engines.

What Are the Most Important AI User Agents You Should Know?

As AI-powered search becomes more prevalent, recognizing the key AI user agents visiting your site is essential. These bots are not a monolithic group; they come from different companies and serve distinct functions, from real-time data retrieval to model training. Each search crawler identifies itself with a unique user-agent string in your server's access logs.

Let's look at the most prominent players.

1. OpenAI User Agent (GPTBot, OAI-SearchBot, ChatGPT-Browser)

OpenAI, the company behind ChatGPT, deploys several distinct AI user agents, each with a specific role. These bots are crucial for how your content appears in and informs one of the world's most popular AI platforms. Understanding their functions is the first step to managing your site's presence in the OpenAI ecosystem.

The most critical agents are used for real-time retrieval, search indexing, and training data collection. OpenAI has publicly stated that it does not use data collected by its real-time or search bots to train its LLMs. You can identify and manage each agent by its user-agent string.

Here is a breakdown of OpenAI's main user agents:

| User Agent Token | Description |
| --- | --- |
| ChatGPT-User | Fetches pages on-demand when a user or Custom GPT initiates a request. This is for real-time retrieval. |
| OAI-SearchBot | Powers ChatGPT's specific search and citation features, helping pages appear in search results. |
| GPTBot | Crawls web content specifically for training OpenAI's generative AI models like GPT-4o and future versions. |
| ChatGPT-Browser | An older bot used for real-time web access from within ChatGPT conversations. |

2. Google AI User Agents (Gemini, Google-Extended, GoogleAgent-Mariner)

Google's approach to AI user agents is deeply integrated with its existing search infrastructure. Given its access to the vast Google search index, its AI products like Gemini and AI Overviews operate differently from other platforms. They primarily rely on the established Googlebot crawler for much of their data.

However, Google has introduced a specific user-agent token, Google-Extended, to give webmasters control over whether their content is used for training generative models. This is not a separate search crawler but a directive you can use in your robots.txt file. Blocking Google-Extended may prevent your content from being used to train Gemini models.

For its more advanced agentic AI, such as Project Mariner, Google uses GoogleAgent-Mariner. This agentic browser is available to premium subscribers and operates in the cloud. Regular AI Overviews, on the other hand, respect the same rules as the standard Googlebot, so if you are accessible to Google Search, you are accessible to AI Overviews.

3. Anthropic User Agents (Claude-Web, ClaudeBot, anthropic-ai)

Anthropic, the developer of the AI assistant Claude, also utilizes a set of user agents to gather data and power its services. While Claude historically did not have live web access, newer versions can now browse the web to provide real-time information and citations, making its bots increasingly important for website owners.

Anthropic uses different agents for training its large language models and for servicing live user chats. According to Anthropic's published crawler documentation, ClaudeBot is the crawler that collects public web data for model training. If you wish to opt out of having your content used to train future Claude models, you can block this user agent in your robots.txt file.

For real-time interactions, such as when a user asks Claude a question that requires current web data, Anthropic documents a separate user-initiated agent, Claude-User. Allowing it ensures your pages can be fetched and cited in Claude's answers. Two older tokens, anthropic-ai and claude-web, still appear in many published block lists, so keeping rules for them does no harm, but they should not be your only rules for Anthropic. Anthropic has stated that its crawlers respect robots.txt directives.

4. Meta AI User Agents (FacebookBot, Meta-ExternalAgent, meta-webindexer)

Meta is rapidly integrating AI into its products like Instagram, WhatsApp, and Facebook, with Meta AI now a core feature. To power these experiences, Meta employs several AI user agents that crawl the web, but their functions can sometimes be ambiguous.

The FacebookBot (and its older version facebookexternalhit) has traditionally been used to generate link previews when a URL is shared on Meta's platforms. However, it has also been used for real-time content retrieval for AI search. The meta-externalagent is documented as being for search crawling and training data collection, while meta-webindexer appears to be building an independent search index.

Unfortunately, Meta does not currently offer a clear way to distinguish between bots collecting training data and those servicing real-time user requests. This makes it difficult to block one without potentially impacting the other. You can identify these agents by their names in your server logs, but granular control remains a challenge.

5. Perplexity AI User Agents (PerplexityBot, Perplexity Stealth, Perplexity-User)

Perplexity AI, a popular AI-powered answer engine, uses its own web crawler to index content for its search results. The primary agent for this is PerplexityBot. Allowing this user agent helps ensure your content can be surfaced and cited in Perplexity's answers, driving traffic back to your site.

Perplexity also uses Perplexity-User, an agent triggered when a user clicks a citation or directly asks the AI to summarize a specific URL. The company states this agent may ignore robots.txt rules because it is considered a direct user-initiated action rather than an automated crawl.

The platform has faced some controversy regarding its crawling practices. Some analyses suggest Perplexity also uses "stealth" agents, which are headless browsers with standard Chrome user agents, to bypass robots.txt blocks. This makes it challenging to control their access completely, as these bots can be indistinguishable from regular human traffic in server logs.

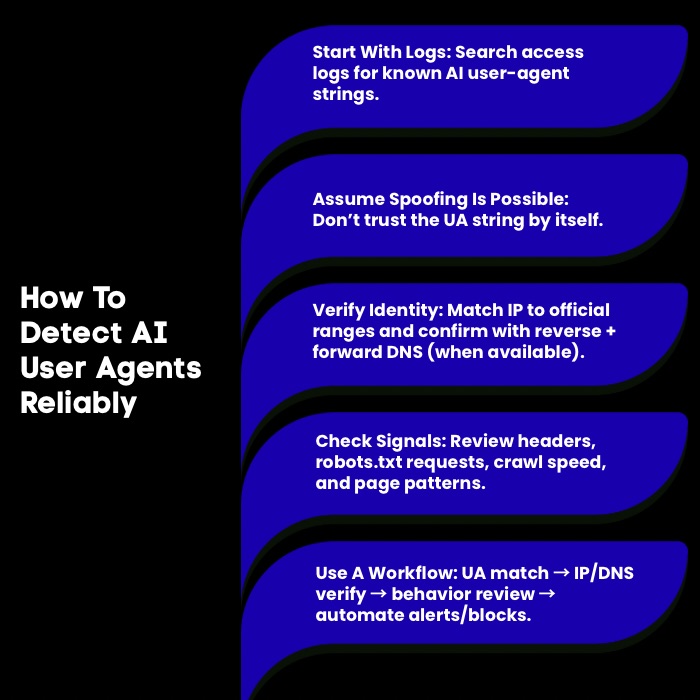

How Can You Reliably Detect AI User Agents On Your Website?

Reliably detecting AI user agents requires a multi-pronged approach because simply trusting the user-agent string is not always enough. The most direct method is to analyze your server logs for known AI web crawler names like GPTBot or PerplexityBot. However, bad actors can engage in user-agent spoofing, where they disguise their bots as legitimate crawlers.

1. Identifying AI Agent User Interfaces in Server Logs

Your website's server logs are the primary source for identifying visiting AI user agents. These logs record every request made to your server, including the user-agent string that identifies the client, which could be a browser, a search crawler, or an AI bot. By filtering or searching these logs, you can spot the signatures of AI activity.

To start, you should look for specific, documented user-agent names. Common identifiers include:

- GPTBot, ChatGPT-User, and OAI-SearchBot from OpenAI.

- PerplexityBot and Perplexity-User from Perplexity.

- ClaudeBot and anthropic-ai from Anthropic.

You can use simple command-line tools like grep to quickly search your access logs for these strings. For example, running a command to search for "gptbot" or "claudebot" will show you every time those crawlers have accessed your site. This initial analysis provides a baseline understanding of which AI user interfaces are interacting with your content.
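The same search can be scripted when you want counts rather than raw matches. The following is a minimal sketch, assuming your access log uses the common "combined" format, where the user-agent string is the last quoted field on each line. The sample lines, IP addresses, and simplified user-agent strings are illustrative placeholders, not real traffic.

```python
from collections import Counter

# Tokens named in this article; extend the tuple as vendors add or rename bots.
AI_AGENT_TOKENS = (
    "GPTBot", "ChatGPT-User", "OAI-SearchBot",
    "PerplexityBot", "Perplexity-User",
    "ClaudeBot", "anthropic-ai",
)

def user_agent_of(line):
    """Return the last double-quoted field of a combined-format log line."""
    parts = line.rsplit('"', 2)
    return parts[1] if len(parts) == 3 else ""

def count_ai_agents(log_lines):
    """Count requests whose user-agent field contains a known AI token."""
    counts = Counter()
    for line in log_lines:
        agent = user_agent_of(line).lower()
        for token in AI_AGENT_TOKENS:
            if token.lower() in agent:
                counts[token] += 1
                break  # attribute each request to one token only
    return counts

# Hypothetical sample lines (documentation IP ranges, simplified user agents).
sample_log = [
    '203.0.113.7 - - [01/Jan/2025:10:00:00 +0000] "GET /robots.txt HTTP/1.1" 200 120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '203.0.113.7 - - [01/Jan/2025:10:00:02 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"',
    '198.51.100.23 - - [01/Jan/2025:10:01:10 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.50 - - [01/Jan/2025:10:02:00 +0000] "GET /gptbot-guide HTTP/1.1" 200 900 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/120.0 Safari/537.36"',
]

counts = count_ai_agents(sample_log)
print(dict(counts))  # {'GPTBot': 2, 'ClaudeBot': 1}
```

Matching only the user-agent field, rather than the whole line, keeps a human visit to a URL such as /gptbot-guide from being counted as bot traffic.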

2. User-Agent Spoofing Risks

While server logs offer useful data, relying only on user-agent strings is risky due to user-agent spoofing. Malicious bots can pose as legitimate ones like Googlebot or GPTBot to bypass security and access restricted content. This puts website administrators at risk: rules meant for real AI crawler user agents can be exploited by fake bots to scrape content, overload servers, or cause other problems.

You may think you're allowing OpenAI’s crawler but could actually let in an unknown third party. Because of this, you need a strong verification process. Don’t trust user-agent strings alone; use extra checks to confirm a bot’s identity and protect your server from unauthorized access disguised as legitimate AI traffic.

3. IP And Reverse DNS Checks

To counter user-agent spoofing, IP and reverse DNS checks are a critical part of your verification workflow. Many major AI companies, including OpenAI and Google, publish the IP ranges from which their official crawlers operate. By cross-referencing the IP address of an incoming request with these published lists, you can verify its authenticity.
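Cross-referencing an address against a published list is a simple containment test. The sketch below uses Python's standard ipaddress module. The CIDR blocks shown are placeholders from the reserved documentation ranges, not any vendor's real ranges, so you would substitute the list each company actually publishes.

```python
import ipaddress

def ip_in_ranges(ip, cidr_ranges):
    """Return True if `ip` falls inside any CIDR block in `cidr_ranges`."""
    address = ipaddress.ip_address(ip)
    for cidr in cidr_ranges:
        network = ipaddress.ip_network(cidr, strict=False)
        # Compare only like with like (IPv4 vs IPv6) before testing membership.
        if address.version == network.version and address in network:
            return True
    return False

# Placeholder ranges (reserved for documentation), NOT real vendor ranges.
EXAMPLE_PUBLISHED_RANGES = ["192.0.2.0/24", "198.51.100.0/28"]

print(ip_in_ranges("192.0.2.44", EXAMPLE_PUBLISHED_RANGES))      # True
print(ip_in_ranges("198.51.100.200", EXAMPLE_PUBLISHED_RANGES))  # False
```

Published ranges change over time, so a check like this is only as good as the freshness of the list you feed it.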

The verification workflow typically involves these steps:

- Run a reverse DNS lookup on the IP address from your server logs. Check if the domain name belongs to the company the user agent claims to represent (e.g., crawl-*-*-*-*.openai.com).

- Perform a forward DNS lookup on the domain name you retrieved. Verify that it resolves back to the original IP address of the request.

- Check against published IP ranges to confirm the address is part of the official network for that search crawler.

This two-way check ensures that the bot is not only coming from a seemingly legitimate IP but also that the IP address itself is properly configured to represent that service. This makes it significantly harder for a spoofer to successfully imitate a legitimate AI user agent.
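The reverse-then-forward lookup can be sketched with Python's standard socket module. This is an illustration under stated assumptions, not a vendor-endorsed procedure: the allowed domain suffix is taken from the example pattern above, the stub resolvers and addresses are hypothetical, and the default forward lookup shown handles IPv4 only.

```python
import socket

def verify_reverse_dns(ip, allowed_suffixes,
                       reverse_lookup=socket.gethostbyaddr,
                       forward_lookup=socket.gethostbyname_ex):
    """Forward-confirmed reverse DNS: IP -> hostname -> IPs must include the IP."""
    try:
        hostname = reverse_lookup(ip)[0]
    except OSError:  # no PTR record or resolver failure
        return False
    hostname = hostname.rstrip(".").lower()
    # Require an exact domain match or a true subdomain, so that
    # "openai.com.evil.example" does not pass as "openai.com".
    if not any(hostname == s or hostname.endswith("." + s) for s in allowed_suffixes):
        return False
    try:
        forward_ips = forward_lookup(hostname)[2]
    except OSError:
        return False
    return ip in forward_ips

# Offline demonstration with stub resolvers (hypothetical hostname and addresses).
def stub_reverse(ip):
    return ("crawl-192-0-2-10.openai.com", [], [ip])

def stub_forward(hostname):
    return (hostname, [], ["192.0.2.10"])

print(verify_reverse_dns("192.0.2.10", ["openai.com"], stub_reverse, stub_forward))  # True
print(verify_reverse_dns("192.0.2.99", ["openai.com"], stub_reverse, stub_forward))  # False
```

The resolvers are passed in as parameters so the logic can be exercised without network access. In production you would leave the defaults in place and cache results, since DNS lookups on every request are slow.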

4. Header And Pattern Signals

Beyond user agents and IP addresses, you can analyze other header and pattern signals in your server logs to identify AI user agents. Legitimate bots often exhibit consistent behavior, while malicious or poorly configured bots may deviate from these norms. Examining these patterns can help you spot suspicious activity.

Look at the full HTTP request header. Official crawlers often include specific information, such as a URL pointing to a page with more information about the bot (e.g., +https://openai.com/bot). The absence of such a link, or a link to a suspicious domain, can be a red flag. Analyze the bot's crawling behavior—does it request your robots.txt file before accessing other pages? Reputable bots almost always do.

Furthermore, observe the request frequency and the types of pages being accessed. An AI bot collecting data might crawl at a steady, machine-like pace, whereas a real user's behavior is more erratic. Unusually aggressive crawling from an unrecognized IP, even with a legitimate-looking user agent, could signal a scraper. These behavioral patterns provide another layer of data for detection.
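These signals can be summarized per client before you decide whether anything looks off. The sketch below is one possible way to do that. The Request record, the field names, and the "+http" heuristic for spotting an info URL are assumptions made for illustration, and any thresholds you apply to the results would need tuning against your own traffic.

```python
from dataclasses import dataclass

@dataclass
class Request:
    timestamp: float  # seconds since some reference point
    path: str
    user_agent: str

def behavior_signals(requests):
    """Summarize simple header and pattern signals for one client's requests."""
    ordered = sorted(requests, key=lambda r: r.timestamp)
    if not ordered:
        return {"has_info_url": False, "robots_txt_first": False,
                "requests_per_minute": 0.0}
    span_seconds = ordered[-1].timestamp - ordered[0].timestamp
    # Floor the window at one minute so a short burst is not divided by ~0.
    minutes = max(span_seconds / 60.0, 1.0)
    return {
        # Official crawlers often embed a "+https://..." info link in the UA.
        "has_info_url": all("+http" in r.user_agent for r in ordered),
        # Did the client ask for robots.txt before anything else?
        "robots_txt_first": ordered[0].path == "/robots.txt",
        "requests_per_minute": len(ordered) / minutes,
    }

# Hypothetical session from a single client.
ua = "Mozilla/5.0 (compatible; GPTBot/1.0; +https://openai.com/gptbot)"
session = [
    Request(0.0, "/robots.txt", ua),
    Request(30.0, "/blog/", ua),
    Request(60.0, "/pricing", ua),
]
signals = behavior_signals(session)
print(signals)
```

None of these signals proves identity on its own, since a spoofer can copy the info URL and fetch robots.txt too. They are most useful for ranking which clients deserve a closer IP and DNS check.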

5. Verification Workflow

Establishing a comprehensive verification workflow is the most reliable way to manage AI user agents. This process combines multiple detection techniques to accurately distinguish legitimate bots from impostors. Relying on a single signal is risky, but a layered approach provides strong, dependable bot detection.

A robust workflow should include the following steps:

- Initial User-Agent Check: Filter server logs for known AI bot user-agent strings to create a preliminary list of potential AI traffic.

- IP and Reverse DNS Verification: For each request, perform reverse and forward DNS lookups to confirm the IP address matches the claimed domain. Cross-reference the IP with the company's published IP ranges.

- Behavioral Analysis: Analyze crawling patterns. Look for robots.txt requests, consistent crawling speeds, and logical navigation paths. Deviations can indicate a fake bot.

For more advanced needs, you can implement automated systems that use machine learning to analyze these signals in real time. These bot detection solutions can automatically identify and block suspicious traffic that mimics AI user agents, saving you from manual analysis and protecting your site from scrapers.

When Should You Allow Or Block AI User Agents?

Deciding when to allow or block AI user agents depends on your business goals and content strategy. It's not an all-or-nothing choice. Allowing certain agents can increase your visibility and drive qualified traffic from AI platforms, while blocking others can protect your proprietary data and reduce server load.

The key is to create selective access rules. You might want to allow a real-time retrieval web crawler like ChatGPT-User to ensure your content is citable, but block a training data collector like GPTBot if you don't want your content used to train future models. Understanding the purpose of each agent is crucial to making the right decision.

When To Allow

Allowing AI user agents is often beneficial, especially if a key business goal is to increase brand visibility and attract a qualified audience. When platforms like ChatGPT or Perplexity can access your content in real time, they can cite your pages as sources in their answers, creating a new and powerful referral channel.

You should generally allow AI user agents when:

- You want to be cited in AI search results: Users who click through from an AI-generated answer are often highly qualified and more likely to convert. Allowing real-time retrieval bots like ChatGPT-User and PerplexityBot makes this possible.

- Your content benefits from broad exposure: If you want general information about your brand, products, or services to be widely known by AI models, allowing training bots can help ensure you are represented accurately across a range of inquiries.

For most organizations, especially those not primarily monetizing content through subscriptions, the benefits of being discoverable in AI environments outweigh the risks. Allowing access ensures you are part of the conversation as these platforms become a dominant way for users to find information.

When To Block

While allowing AI bots has its advantages, there are strategic reasons to block certain AI user agents. The decision to block often revolves around protecting intellectual property, managing server resources, and controlling how your data is used. Not all AI bot traffic is beneficial for every website.

Consider blocking AI bots under these circumstances:

- Your content is proprietary or behind a paywall: If your business model relies on selling access to content, you likely want to block data collection from bots used for model training to prevent them from learning and reproducing your valuable information for free.

- You want to prevent model training on your data: If you are concerned about large language models being trained on your content without compensation or permission, you can block training-specific bots like GPTBot and CCBot. This can be done without affecting your visibility in real-time AI search results.

Blocking can also be a practical measure to prevent routine tasks performed by bots from overwhelming your server, especially if you see aggressive crawling from agents that provide no clear benefit. A targeted blocking strategy allows you to protect sensitive content while still engaging with beneficial AI traffic.

Indexing Vs Training Vs Browsing

It is crucial to understand the three primary functions of AI user agents: indexing, training, and browsing. Each activity impacts your website differently, and you can control them separately.

Indexing is similar to what a traditional web crawler does. Bots like OAI-SearchBot or PerplexityBot crawl your content to build a search database specifically for their AI-powered search engines. Allowing this is essential if you want your pages to appear in their search results.

Training is the process where bots like GPTBot or ClaudeBot collect massive amounts of data from the web to build and refine their foundation models. This data becomes part of the model's general knowledge. You can opt out of this process by blocking these specific bots. Finally, browsing (or real-time retrieval) is when a bot like ChatGPT-User fetches a page on-demand to answer a live user query. This is a direct, user-initiated action and is critical for getting your most current content cited.
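Because the three functions map to different user-agent tokens, a policy can be expressed per purpose and turned into rules mechanically. The sketch below groups the agents named in this section by the purpose the article assigns them and emits one robots.txt group per agent. The mapping and the function name are illustrative, and you should confirm each token's current purpose against the vendor's documentation before relying on it.

```python
# Purpose of each agent as described in this section (verify against vendor docs).
AGENT_PURPOSE = {
    "OAI-SearchBot": "indexing",
    "PerplexityBot": "indexing",
    "GPTBot": "training",
    "ClaudeBot": "training",
    "ChatGPT-User": "browsing",
}

def build_robots_txt(blocked_purposes):
    """Emit one robots.txt group per agent, disallowing blocked purposes."""
    lines = []
    for agent, purpose in AGENT_PURPOSE.items():
        rule = "Disallow: /" if purpose in blocked_purposes else "Allow: /"
        lines += [f"User-agent: {agent}", rule, ""]
    return "\n".join(lines)

# Example policy: opt out of training, stay visible for indexing and browsing.
robots_txt = build_robots_txt({"training"})
print(robots_txt)
```

Keeping the purpose mapping in one place means a change of policy, such as also blocking indexing bots, is a one-word edit rather than a hand rewrite of the file.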

Selective Access Rules

You can and should use selective access rules to manage AI user agents. The most common and effective tool for this is your website's robots.txt file. This simple text file, placed at the root of your domain, allows you to specify rules for individual bots or groups of bots.

You can implement a granular strategy to align with your business processes. For example:

- Allow beneficial bots: You can explicitly permit crawlers that drive traffic, like OAI-SearchBot and PerplexityBot, to access your entire site or specific sections.

- Block training bots: If you want to prevent your content from being used to train AI models, you can disallow agents like GPTBot and CCBot. You can even block them from specific directories, like a /private/ folder, while allowing them to access general content.

This selective approach gives you the power to control your content's destiny in the AI ecosystem. You can welcome the search crawler bots that help with discovery while turning away the data collectors if that fits your strategy, all through simple robots.txt directives.
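To make the rules above concrete, here is a small sketch that embeds an example robots.txt in a Python string and evaluates it with the standard library's urllib.robotparser. The rule set and the example.com URLs are illustrative choices, not a recommended policy. Note also that this parser is a simple implementation, so individual crawlers may resolve conflicting rules differently.

```python
from urllib.robotparser import RobotFileParser

# Example policy: welcome search bots, keep GPTBot out of /private/,
# and block CCBot everywhere.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: GPTBot
Disallow: /private/

User-agent: CCBot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def allowed(agent, path):
    """Ask the parsed rules whether `agent` may fetch `path`."""
    return parser.can_fetch(agent, "https://example.com" + path)

print(allowed("GPTBot", "/private/report.html"))         # False
print(allowed("GPTBot", "/blog/post"))                   # True
print(allowed("CCBot", "/blog/post"))                    # False
print(allowed("OAI-SearchBot", "/private/report.html"))  # True
```

Each bot is matched against its own group only, which is why GPTBot is limited to the /private/ restriction while OAI-SearchBot can still reach that folder.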

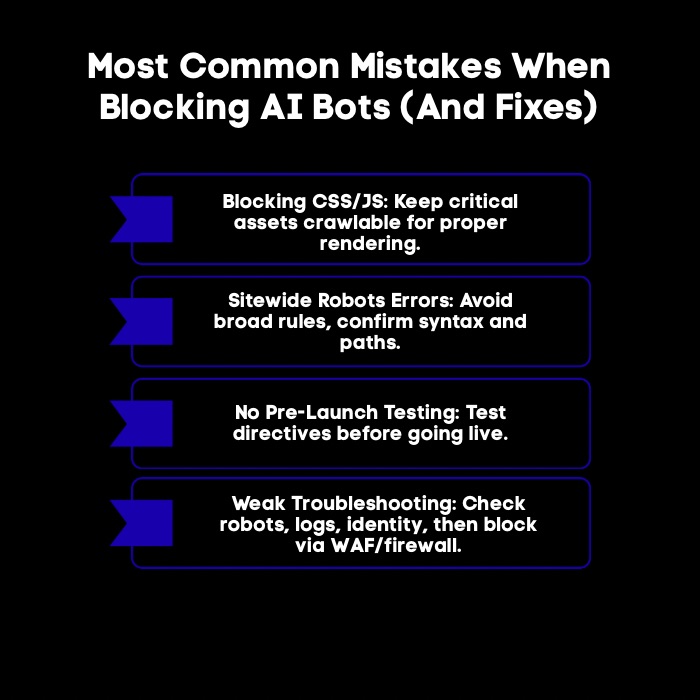

What Are the Most Common Mistakes When Blocking AI Bots, And How Do You Fix Them?

When blocking AI bots, website owners often make simple mistakes that can have unintended negative consequences, such as hurting their visibility in both AI and traditional search. Let’s look at the most common mistakes in detail:

1. Blocking CSS/JS

A common mistake website admins make is blocking access to CSS and JavaScript files, often by disallowing entire directories. This prevents web crawlers, including Google’s, from fully rendering your pages and understanding layout and content. If crawlers can’t access these files, they may see only unstyled HTML, leading to incorrect indexing and poor search results. As bots’ JS capabilities improve, it’s essential to allow crawlers access to the CSS and JS needed for proper page rendering.

2. Accidental Sitewide Blocks

A common and damaging error is an accidental sitewide block caused by a typo in the robots.txt file. For example, a misplaced slash in a Disallow directive can unintentionally prevent search engines like Googlebot from crawling your entire site, leading to complete de-indexing.

To avoid this, carefully review your robots.txt for overly broad Disallow rules and specify only the exact directories you want to block. Regularly validate your robots.txt file to catch mistakes before they harm your site's visibility.
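One way to catch this class of typo is a small lint pass that flags any group containing a bare `Disallow: /`. The sketch below is a deliberately simple line parser written for illustration. The function name is hypothetical, and the parser does not implement the full robots.txt grammar (wildcards, for instance, are ignored).

```python
def find_sitewide_blocks(robots_txt):
    """Return user-agent tokens whose group contains a bare `Disallow: /`."""
    flagged = []
    current_agents = []
    in_rules = False
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        if ":" not in line:
            continue
        field, value = line.split(":", 1)
        field, value = field.strip().lower(), value.strip()
        if field == "user-agent":
            if in_rules:  # a user-agent line after rules starts a new group
                current_agents = []
                in_rules = False
            current_agents.append(value)
        elif field in ("allow", "disallow"):
            in_rules = True
            if field == "disallow" and value == "/":
                for agent in current_agents:
                    if agent not in flagged:
                        flagged.append(agent)
    return flagged

# Draft where "/private/" was mistyped as "/" in the wildcard group.
DRAFT = """\
User-agent: *
Disallow: /

User-agent: GPTBot
Disallow: /private/
"""
print(find_sitewide_blocks(DRAFT))  # ['*']
```

A sitewide block is sometimes intentional, for example for a training crawler you want to exclude entirely, so treat the output as a list to review rather than a list of errors.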

3. Testing Changes

Never deploy changes to your robots.txt file without testing them first. A small syntax error can have major consequences for your site's visibility, so a thorough verification workflow is a non-negotiable step. Testing ensures that your rules work as intended, blocking the right AI bots while allowing the ones you want.

There are several excellent tools available for this purpose.

- Use online robots.txt testers: Tools like Merkle's robots.txt Tester allow you to paste your robots.txt content and test it against specific user-agent strings and URLs. This helps you confirm whether a rule is allowing or blocking access correctly.

- Leverage AI Search Consoles: Emerging tools like Knowatoa's AI Search Console can test your site against a wide range of AI user agents simultaneously, flagging any access issues and streamlining your audit process.

Integrating these tests into your business processes before pushing changes live will prevent costly mistakes. It allows you to confidently manage bot access, knowing your directives are correctly implemented and won't accidentally harm your SEO efforts.
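A pre-deployment check can also be scripted so it runs every time the file changes. The sketch below compares a draft robots.txt against a list of expectations using Python's urllib.robotparser. The expectations, paths, and example.com base URL are hypothetical, and because this parser is a simplified implementation, it complements the testers mentioned above rather than replacing them.

```python
from urllib.robotparser import RobotFileParser

def check_expectations(robots_txt, expectations, base_url="https://example.com"):
    """Return (agent, path, expected, actual) for every rule that misbehaves."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    failures = []
    for agent, path, expected in expectations:
        actual = parser.can_fetch(agent, base_url + path)
        if actual != expected:
            failures.append((agent, path, expected, actual))
    return failures

# What the site owner intends: block GPTBot only, keep everything else open,
# including the CSS that crawlers need for rendering.
EXPECTATIONS = [
    ("GPTBot", "/", False),
    ("Googlebot", "/", True),
    ("Googlebot", "/assets/site.css", True),
    ("ChatGPT-User", "/pricing", True),
]

# Draft containing a mistake: the wildcard group blocks the whole site.
BAD_DRAFT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /
"""

failures = check_expectations(BAD_DRAFT, EXPECTATIONS)
for agent, path, expected, actual in failures:
    print(f"{agent} {path}: expected allowed={expected}, got {actual}")
```

With this draft the check reports three failures, one for each Googlebot and ChatGPT-User expectation, which is the signal to stop the deployment and fix the wildcard group.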

4. Troubleshooting Checklist

When you suspect an issue with how AI bots are interacting with your site, a systematic troubleshooting checklist can help you quickly identify and resolve the problem. Instead of guessing, follow a structured process to diagnose whether you have incorrectly blocked a bot or if a bot is ignoring your rules.

Start with this checklist:

- Verify your robots.txt file: Use a robots.txt testing tool to confirm that your rules for the specific user agent in question are correct. Check for syntax errors, typos, or overly broad Disallow directives that might be causing the issue.

- Analyze server logs: Search your server logs for the user agent you are troubleshooting. Do you see any hits from it? If the bot you expect is absent, it's likely being blocked. If it's present but accessing pages it shouldn't, it might be ignoring your rules. Check the IP address to verify its identity.

If a bot appears to be ignoring your robots.txt file, the next step is to escalate. Legitimate bots should obey your rules, but if they don't, you may need to implement firewall rules to block the bot by its IP address. This checklist helps you move from simple configuration checks to more advanced enforcement actions.

Scalenut: Where AI Visibility Meets SEO Execution

Allowing or blocking AI user agents is only step one. The real win is knowing whether your brand is getting mentioned in AI answers, why it is not, and what to do next. That’s where Scalenut comes in: it combines GEO tracking with SEO execution in one platform.

- Track AI Mentions And Share Of Voice: See how often you appear in ChatGPT and Perplexity, plus Visibility Score, Average Position, and Share Of Voice.

- Prompt And Citation Insights: Find which prompts trigger mentions and which sources AI cites to justify answers.

- Actionable GEO Recommendations: Turn visibility gaps into next steps with content ideas, authority/backlink suggestions, and engagement opportunities.

- AI Traffic Signals: Spot AI-driven visits, top AI sources, and the most referenced pages.

Want to see how Scalenut tracks your AI visibility and turns it into an execution plan? Book a demo and get a walkthrough tailored to your site and competitors.

Conclusion

Understanding AI user agents is crucial for managing your website’s interactions with these advanced technologies. By recognizing the differences between AI user agents and traditional web crawlers, you can create strategic approaches to allow or block specific bots as needed. With knowledge of the important AI user agents and their behaviors, you can enhance user experience and optimize your site's indexing capabilities.

Remember, making informed decisions about which AI agents to allow can significantly impact your site’s visibility and functionality. If you want to dive deeper into this topic, don’t hesitate to reach out for a consultation to ensure your website is well-prepared for the evolving digital landscape.

Frequently Asked Questions

How do AI user agents impact website search and indexing?

AI user agents impact search by enabling real-time content retrieval for AI-powered search engines. Unlike traditional indexing, these agents can fetch information from your web pages on-demand to generate immediate answers. Allowing access to the right search crawler ensures your site is cited in these new search results, driving qualified traffic.

Can I prevent specific AI user agents from crawling my site?

Yes, you can prevent specific AI user agents from crawling your site using your robots.txt file. By adding a Disallow rule for a particular user agent, you can instruct that web crawler not to access your entire site or specific directories, giving you selective access control over which bots can interact with your content.

Do AI user agent crawlers follow robots.txt directives like other bots?

Most reputable AI user agents, like those from OpenAI and Google, state that they follow robots.txt directives, similar to traditional bots like Googlebot. However, some bots, particularly those performing user-initiated actions or less reputable crawlers, may ignore these rules. It is always best to monitor your server logs to verify a bot's behavior.

What are the best user interfaces in enterprise AI agent development?

The best user interfaces in enterprise AI agent development combine transparency, control, and auditability. Look for interfaces that show agent steps, sources, tool calls, and permissions, with clear human override options. Enterprise-grade UX also needs role-based access, logging, and governance workflows.

What are the benefits of lifelike AI agents for user experience?

The benefits of lifelike AI agents for user experience include higher engagement, better comprehension, and smoother task completion. When agents feel natural, users ask clearer questions and trust guidance more. The key is balancing personality with accuracy, speed, accessibility, and consistent responses.

What is a practical list of AI user agents website owners should recognize?

A useful list of AI user agents includes GPTBot, OAI-SearchBot, ChatGPT-User, Google-Extended, ClaudeBot, anthropic-ai, PerplexityBot, Perplexity-User, FacebookBot, Meta-ExternalAgent, and meta-webindexer. Track them in server logs, then decide on allow or block rules via robots.txt or firewall policies.
