Chain of Thought vs Prompt Chaining: How Are They Different?

Key Highlights

- Prompt engineering techniques like Chain of Thought (CoT) and prompt chaining enhance how large language models handle complex tasks.

- The key differences lie in their structure; CoT uses a single prompt to guide a reasoning process, while prompt chaining uses a sequence of prompts.

- Chain of thought prompting excels at tasks requiring logical deduction and a clear reasoning process to arrive at a correct answer.

- Prompt chaining is ideal for multistage content creation, breaking down large projects into manageable steps for iterative refinement.

- Combining these methods can optimize AI workflows, improving outcomes for everything from data analysis to creative content generation.

In the evolving field of artificial intelligence, prompt engineering is vital for getting accurate and useful outputs from language models. Two advanced techniques, Chain of Thought (CoT) prompting and prompt chaining, have emerged as powerful methods to enhance AI performance. While both guide an AI's response, they differ in their approach and application. Understanding these methods is key to unlocking the full potential of generative AI, especially for tasks that demand complex logical reasoning and structured problem-solving.

What is Chain of Thought Prompting in LLMs?

Chain of Thought (CoT) prompting is a prompt engineering technique designed to improve the performance of large language models (LLMs), particularly on complex tasks. It guides the model through a step-by-step reasoning process, encouraging it to "think out loud" in natural language before providing a final answer. This approach simulates a more human-like thought process.

Unlike prompt chaining, which involves multiple separate prompts, CoT elicits the entire reasoning process within a single prompt. This method has various use cases in natural language processing, especially for problems that require multistep logical deduction.

How Does the Chain of Thought Reasoning Process Work?

The chain of thought reasoning process simulates a human-like approach to problem-solving. It works by breaking down complex problems into a series of manageable, intermediate steps that logically lead to a conclusion. Instead of jumping directly to an answer, the language model articulates its thought process, showing each reasoning step it takes.

This method typically involves adding a simple instruction to your prompt, such as "explain your answer step-by-step." This small addition encourages the LLM to detail the series of intermediate steps it used to arrive at its conclusion. This makes the model's reasoning transparent and easier to follow, which is a key benefit.
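
As a minimal sketch of that "simple instruction" idea, the snippet below appends a step-by-step request to an ordinary question. The helper name `with_cot_instruction` is hypothetical, and no model is called; the point is only to show what the final prompt text looks like.

```python
def with_cot_instruction(
    question: str,
    instruction: str = "Explain your answer step-by-step.",
) -> str:
    """Return the question followed by a step-by-step instruction."""
    return f"{question.strip()}\n\n{instruction}"


question = "If you buy 5 apples and already have 3, how many do you have in total?"
cot_prompt = with_cot_instruction(question)
print(cot_prompt)
```

The resulting string is what you would send as a single prompt; the instruction wording can be swapped for any phrasing that asks for intermediate steps.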

By leveraging exemplar-based prompts that illustrate this reasoning process, you can guide the model to generate similar logical chains for new tasks. This enhances its ability to tackle complex problems that require a structured thought process, making it a powerful tool for improving accuracy and reliability.
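
One way an exemplar-based prompt could be assembled is sketched below. The `Exemplar` class, the `Q:` / `Reasoning:` / `Answer:` labels, and the bookshelf example are illustrative assumptions, not a required format. The prompt ends with an open `Reasoning:` line so the model continues in the same pattern.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    question: str
    reasoning: str
    answer: str


def build_exemplar_prompt(exemplars: list[Exemplar], new_question: str) -> str:
    """Format worked examples, then leave the reasoning for the new question open."""
    blocks = [
        f"Q: {ex.question}\nReasoning: {ex.reasoning}\nAnswer: {ex.answer}"
        for ex in exemplars
    ]
    blocks.append(f"Q: {new_question}\nReasoning:")
    return "\n\n".join(blocks)


exemplars = [
    Exemplar(
        question="A shelf holds 4 books and you add 6 more. How many books are on it?",
        reasoning="Start with 4 books. Add 6 books. 4 + 6 = 10.",
        answer="10",
    )
]
few_shot_prompt = build_exemplar_prompt(
    exemplars,
    "If you buy 5 apples and already have 3, how many do you have in total?",
)
print(few_shot_prompt)
```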

What Are the Benefits of Chain of Thought LLM Techniques?

Using Chain of Thought (CoT) prompting techniques with LLMs offers significant advantages, especially for complex tasks that demand strong logical reasoning. This approach improves the overall output quality by encouraging a structured, step-by-step thinking process rather than a direct, and potentially flawed, answer.

One of the primary benefits is enhanced transparency. The generation of intermediate steps provides a clear window into how the model reached its conclusion. This makes it easier to understand and debug the AI's logic. For complex problem solving, CoT is often better than prompt chaining because it fosters a cohesive, uninterrupted reasoning flow within a single context, reducing the chances of logical errors.

Here are some key benefits:

- Improved Accuracy: Breaking problems down into simpler, logical steps leads to more accurate and reliable answers.

- Enhanced Transparency: The intermediate steps make the AI's decision-making process understandable for users.

- Better Multistep Reasoning: It systematically tackles each part of a problem, excelling in tasks requiring sequential logic.

What Is an Example of Chain of Thought Prompting?

An excellent example of CoT prompting can be seen when solving a math word problem. Instead of asking for just the final answer, you prompt the model to show its work. For instance, if you ask, "If you buy 5 apples and already have 3, how many do you have in total?" using CoT prompting, the model would first lay out the intermediate reasoning steps.

The model might respond in natural language with a series of intermediate steps like: "Start with 3 apples," followed by, "Add 5 apples to the existing 3." It then concludes with the correct answer, "Total apples = 8." This process demonstrates the model's logical path from the initial state to the solution.

This method forces the model to articulate its reasoning, which often leads to a more accurate outcome compared to a direct query. By detailing the steps taken, the CoT approach ensures the final answer is not just a guess but the result of a logical, traceable process.
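
Because the reasoning is written out, the response can also be checked programmatically. The sketch below takes the apple response quoted above as a hard-coded string (no model is called), splits it into steps, and pulls out the final number. Treating "the last integer in the text" as the answer is a simplifying assumption that only suits short arithmetic responses like this one.

```python
import re
from typing import Optional


def extract_final_number(response: str) -> Optional[int]:
    """Return the last integer mentioned in the response, or None if there is none."""
    numbers = re.findall(r"-?\d+", response)
    return int(numbers[-1]) if numbers else None


# The response from the example above, written as one step per line.
response = (
    "Start with 3 apples.\n"
    "Add 5 apples to the existing 3.\n"
    "Total apples = 8."
)

steps = [line for line in response.splitlines() if line.strip()]
final_answer = extract_final_number(response)

for number, step in enumerate(steps, start=1):
    print(f"Step {number}: {step}")
print("Final answer matches 3 + 5:", final_answer == 3 + 5)
```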


What is Prompt Chaining?

Prompt chaining is a prompt engineering method that breaks down large, complex tasks into a sequence of smaller, more focused prompts. Each prompt in the chain addresses a specific subtask, and its output is often used as the input for the next prompt. This approach allows the AI model to concentrate on one manageable step at a time.

This technique improves AI-generated outputs by ensuring each part of a complex project receives dedicated attention, leading to higher-quality and more refined results. Its use cases are common in content generation, data analysis, and other multi-stage AI workflows where iterative development is beneficial.

How Does Prompt Chaining Work?

Prompt chaining functions by dividing a complex task into a series of manageable steps, with each step being handled by a distinct prompt. You start by creating a prompt for the first subtask. The output generated from this initial prompt is then used as the input, or context, for the next prompt in the sequence.

This creates a prompt chain where each link builds upon the last. For example, in a content creation workflow, the first prompt might generate a blog outline, the second might draft the introduction based on that outline, and a third could develop the main body paragraphs. This sequential process allows for a focused and structured approach to generative AI tasks.

By structuring AI workflows this way, you guide the model through a project one piece at a time. This modular approach makes it easier to control the outcome, review progress at each stage, and refine specific parts of the task without having to rerun the entire process.
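
The output-to-input loop can be sketched in a few lines. In the snippet below, `run_chain`, the `{input}` placeholder, and `echo_model` are all hypothetical: `echo_model` is a stand-in that simply echoes the first line of each prompt, so the example runs without any real LLM API. In practice you would pass a function that calls your model of choice.

```python
from typing import Callable

Model = Callable[[str], str]


def run_chain(model: Model, templates: list[str], initial_input: str) -> list[str]:
    """Run each template in order, feeding every output into the next prompt."""
    outputs: list[str] = []
    current = initial_input
    for template in templates:
        prompt = template.format(input=current)
        current = model(prompt)
        outputs.append(current)  # keep every stage so it can be reviewed later
    return outputs


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call: echoes the first line of the prompt."""
    return f"[output for: {prompt.splitlines()[0]}]"


templates = [
    "Create a blog outline about: {input}",
    "Draft the introduction based on this outline:\n{input}",
    "Develop the main body paragraphs that follow this introduction:\n{input}",
]
outputs = run_chain(echo_model, templates, "prompt chaining")
for stage, output in enumerate(outputs, start=1):
    print(stage, output)
```

Keeping every intermediate output, rather than only the last one, is what makes it possible to review progress at each stage.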

What Are the Benefits of Prompt Chaining?

Prompt chaining offers significant advantages for various AI applications, particularly those involving content creation and multi-step processes. By breaking tasks into manageable parts, this technique allows for greater focus and precision at each stage, which can dramatically improve the quality of the final output.

This method is ideal for iterative refinement. Since each step is a separate prompt, you can easily troubleshoot and adjust a specific part of the process without affecting the others. This makes it simpler to identify and fix issues, saving time and resources. You should use prompt chaining for tasks that can be naturally divided into sequential stages, such as drafting and editing a document.

Key benefits of prompt chaining include:

- Increased Focus: The AI model concentrates on smaller steps, leading to higher-quality results for each subtask.

- Easier Troubleshooting: Isolating issues within a specific prompt is much simpler than debugging a single, large prompt.

- Improved Scalability: It streamlines content workflows, enabling faster and more scalable production.

- Enhanced Collaboration: The clear, sequential process makes it easier for teams to contribute to and understand AI-driven projects.

What Is an Example of Prompt Chaining?

A practical example of prompt chaining in AI content generation is the task of summarizing a long article. Instead of one complex command, you use a series of prompts to guide the AI model through the process. Each prompt triggers a separate API call, building on the previous output.

First, you might use a prompt to create an initial draft summary: "Read the provided article and generate a rough summary of its main points." The output of this is then fed into the next prompt, which could be: "Critique this summary, identifying areas for improvement or clarification."

A third prompt would then check for factual errors against the original text. Finally, a fourth prompt incorporates all the feedback to produce a polished and accurate summary. This prompt engineering technique is effective even with smaller models, as it breaks the task into focused, manageable steps, ensuring a higher-quality result than a single, all-encompassing prompt might achieve.
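
The four-step summary chain might be wired together roughly as follows. The first two prompt texts are the ones quoted above; the wording of the fact-check and final prompts is an assumption, as are the function name `summarize_with_chain` and the `stub_model`, which returns numbered placeholder responses instead of calling a real API.

```python
from typing import Callable


def summarize_with_chain(article: str, model: Callable[[str], str]) -> dict[str, str]:
    """Draft, critique, fact-check, then polish a summary with four separate calls."""
    draft = model(
        "Read the provided article and generate a rough summary of its main points."
        f"\n\nArticle:\n{article}"
    )
    critique = model(
        "Critique this summary, identifying areas for improvement or clarification."
        f"\n\nSummary:\n{draft}"
    )
    # Assumed wording: the article describes this step but does not quote a prompt.
    fact_check = model(
        "List any statements in the summary that the article does not support."
        f"\n\nArticle:\n{article}\n\nSummary:\n{draft}"
    )
    # Assumed wording: combines all earlier outputs into the final request.
    final = model(
        "Rewrite the summary using the critique and the fact check."
        f"\n\nSummary:\n{draft}\n\nCritique:\n{critique}\n\nFact check:\n{fact_check}"
    )
    return {"draft": draft, "critique": critique, "fact_check": fact_check, "final": final}


calls: list[str] = []


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call: records the prompt, returns a placeholder."""
    calls.append(prompt)
    return f"<response {len(calls)}>"


result = summarize_with_chain("Example article text.", stub_model)
print(len(calls), "calls made; final output:", result["final"])
```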

Chain of Thought vs Prompt Chaining: What Are the Core Differences?

Chain of Thought (CoT) and prompt chaining differ in structure and purpose. CoT uses a single prompt to guide the model through an internal, step-by-step reasoning process, improving logical coherence. Prompt chaining breaks a complex task into a sequence of prompts, creating an external workflow where each step builds on the previous one. CoT emphasizes internal reasoning, while prompt chaining structures external task completion. These differences between prompt chaining and Chain of Thought determine which method works best for specific tasks.

Goal And Output

The primary goal of Chain of Thought (CoT) prompting is to improve the logical accuracy of the final answer by exposing the model's reasoning process. Instead of a direct response, the output includes the intermediate steps the model took to get there. This is particularly beneficial for tasks where the journey to the answer is as important as the answer itself, enhancing transparency and allowing for easier debugging.

Prompt chaining, however, aims to improve output quality by breaking a large task into smaller, sequential steps. Each prompt generates a piece of the final product, and the goal is to refine the output at each stage. This is common in content creation, where an initial draft is critiqued, fact-checked, and polished through a series of prompts. The focus is on building a high-quality output piece by piece.

| Feature | Chain of Thought (CoT) | Prompt Chaining |
| --- | --- | --- |
| Goal | Improve reasoning accuracy | Deconstruct a complex task |
| Output | A single response including the reasoning process | A series of outputs from multiple prompts |
| Focus | Internal thought process | External workflow management |

Structure And Inputs

The structure of Chain of Thought (CoT) prompting is centered around a single, comprehensive input. This prompt is designed to trigger a model's internal logical reasoning capabilities, asking it to generate not just an answer but also the intermediate steps that led to it. The entire process, from problem to solution, is contained within one interaction.

Conversely, prompt chaining is defined by its multi-input structure. A task is broken down into manageable steps, and each step corresponds to a separate prompt. The output from one prompt typically becomes the input for the next, creating a dependent sequence. This modular structure allows for intervention and refinement at each stage of the workflow.

This fundamental difference in structure and inputs dictates their use. CoT is monolithic, designed to guide a cohesive thought process in one go. Prompt chaining is sequential and modular, built for tasks that benefit from being divided into discrete, manageable parts, allowing for a more controlled and iterative workflow.

Reliability And Debugging

When it comes to reliability and debugging, both techniques offer unique advantages. With Chain of Thought (CoT), the transparent reasoning process makes it easier to spot where the logic went wrong. By examining the intermediate steps, you can pinpoint the source of an error, which helps in debugging the model's conclusions. This transparency can help reduce the error rate in complex reasoning tasks.

Prompt chaining simplifies error handling by modularizing the task. If an issue arises, you can isolate it to a specific prompt in the chain instead of having to debug a single, complex instruction. This makes the debugging process more targeted and efficient, as you only need to refine the problematic step in the AI workflow rather than starting over.

However, prompt chaining's complexity can introduce its own challenges. A mistake in an early prompt can cascade through the entire sequence, affecting all subsequent outputs. In contrast, an error in a CoT prompt is contained within a single response, which can sometimes make the error handling more straightforward, even if the reasoning process itself is intricate.
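
One common way to limit that cascade is to check each step's output before passing it on. The sketch below is an illustration under assumptions: `run_checked_chain`, `StepFailed`, and the simple "non-empty" check are hypothetical, and the steps are plain functions standing in for model calls. The first step deliberately returns an empty string to show the chain stopping early.

```python
from typing import Callable

# (name, function that runs the step, function that checks its output)
Step = tuple[str, Callable[[str], str], Callable[[str], bool]]


class StepFailed(Exception):
    """Raised when a step's output fails its check."""


def run_checked_chain(steps: list[Step], initial_input: str) -> str:
    """Run steps in order and stop at the first output that fails validation."""
    current = initial_input
    for name, run, is_valid in steps:
        current = run(current)
        if not is_valid(current):
            raise StepFailed(f"step '{name}' produced unusable output: {current!r}")
    return current


executed: list[str] = []


def bad_outline(topic: str) -> str:
    executed.append("outline")
    return ""  # simulate an empty, unusable outline


def draft(outline: str) -> str:
    executed.append("draft")
    return f"Draft based on: {outline}"


def non_empty(text: str) -> bool:
    return bool(text.strip())


failure = ""
try:
    run_checked_chain(
        [("outline", bad_outline, non_empty), ("draft", draft, non_empty)],
        "prompt chaining",
    )
except StepFailed as error:
    failure = str(error)

print(failure)
print("steps that ran:", executed)
```

Because the check fails at the outline step, the draft step never runs, so the bad output does not propagate.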

Cost, Latency, And Token Use

Cost, latency, and token use are critical factors when choosing between these two methods. Chain of Thought (CoT) prompting can be more token-intensive for a single API call because the AI model generates both the reasoning steps and the final answer. This longer, more detailed output increases the token use and can lead to higher latency as the model "thinks" for longer before responding.

On the other hand, prompt chaining involves a sequence of prompts, meaning multiple API calls. While each individual call might be faster and use fewer tokens, the cumulative cost and total latency can be higher. The total token use across the entire chain may exceed that of a single, complex CoT prompt, especially for multi-stage tasks.

Ultimately, the more cost-effective option depends on the task. For problems that can be solved with a clear line of reasoning, a single CoT prompt might be more efficient. For complex workflows requiring iterative refinement, prompt chaining's higher number of API calls might be a necessary trade-off for improved control and output quality.
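
A rough way to reason about this trade-off is to add up input and output tokens per call. Every number in the sketch below is invented purely for illustration; real counts depend on the model, the tokenizer, and the task. The chain's input grows at each step because earlier output is passed forward as context.

```python
from dataclasses import dataclass


@dataclass
class Call:
    input_tokens: int
    output_tokens: int


def total_tokens(calls: list[Call]) -> int:
    """Sum input and output tokens across every call."""
    return sum(call.input_tokens + call.output_tokens for call in calls)


# Hypothetical figures: one long CoT response versus a four-step chain.
cot_calls = [Call(input_tokens=200, output_tokens=600)]
chain_calls = [
    Call(input_tokens=200, output_tokens=150),
    Call(input_tokens=350, output_tokens=150),
    Call(input_tokens=500, output_tokens=150),
    Call(input_tokens=650, output_tokens=200),
]

print("CoT:  ", len(cot_calls), "call, ", total_tokens(cot_calls), "tokens")
print("Chain:", len(chain_calls), "calls,", total_tokens(chain_calls), "tokens")
```

With these made-up figures, each chained call is smaller than the single CoT call, yet the chain's cumulative total is larger, which is the pattern described above.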

How To Put Prompt Chaining And Chain Of Thought Into Your Content Workflow?

Integrating prompt chaining and Chain of Thought (CoT) into AI workflows enhances content creation. Begin by dividing your project into manageable subtasks—this forms the basis of prompt chaining. For tasks needing deeper reasoning, apply CoT prompting. This hybrid approach combines prompt chaining’s structure with CoT’s analytical power. Following prompt engineering best practices ensures an efficient, effective workflow that leverages both techniques.

1. Break Tasks Into Steps

The first step in implementing a hybrid approach is to deconstruct your larger goal into manageable steps. This is the core principle of problem-solving and the foundation of an effective prompt chain. By breaking a complex task into smaller steps, you allow the AI to focus on one specific aspect at a time, which generally leads to higher-quality outputs.

For example, if your task is to write a comprehensive blog post, you wouldn't ask the AI to do it all at once. Instead, you would create a sequence of prompts to guide the process. Each step in your prompt chain should be a distinct and logical part of the overall project.

A typical breakdown for a blog post might look like this:

- Research the topic and gather key information and statistics.

- Create a detailed outline with H2s and H3s.

- Write the content for each section based on the outline.
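
The last item in this breakdown fans out into one prompt per section. A minimal sketch of that fan-out is shown below; the function name `section_prompts`, the prompt wording, and the assumption that the outline arrives as one heading per line are all illustrative.

```python
def section_prompts(topic: str, outline: str) -> list[str]:
    """Build one writing prompt per outline heading, each carrying the full outline."""
    headings = [line.strip() for line in outline.splitlines() if line.strip()]
    return [
        f"Topic: {topic}\nOutline:\n{outline.strip()}\n\n"
        f"Write the section titled: {heading}"
        for heading in headings
    ]


outline = """
What is Chain of Thought prompting?
What is prompt chaining?
When to use each
"""
prompts = section_prompts("CoT vs prompt chaining", outline)
print(len(prompts), "section prompts")
print(prompts[0])
```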

2. Plan Prompt Handoffs

Once you've broken your task into steps, you need to carefully plan the prompt handoff between each stage. A smooth handoff is crucial for maintaining context and ensuring the sequence of prompts works as a cohesive unit. The output of one prompt must be structured to serve as a clean and effective input for the next.

To achieve this, focus on clarity and relevance. The information passed from one step to the next should be concise and contain only what is necessary for the subsequent task. Overloading a prompt with irrelevant details from a previous step can confuse the AI and lead to errors in your AI workflows for content creation.

Here are some tips for effective prompt handoffs:

- Map Output to Input: Explicitly design the output of one prompt to fit the input requirements of the next.

- Avoid Information Overload: Keep the handoff focused on the essential information needed to proceed.

- Maintain Consistency: Ensure the tone, style, and key terminology remain consistent throughout the chain.
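
One way to apply these tips is to ask the earlier step for structured output and pass along only the fields the next step needs. The sketch below assumes the outline step returns JSON with `title` and `sections` keys; that schema, the function names, and the sample data are hypothetical.

```python
import json

REQUIRED_KEYS = {"title", "sections"}


def parse_outline(raw: str) -> dict:
    """Parse the outline step's JSON output and confirm the expected keys exist."""
    data = json.loads(raw)
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"outline is missing keys: {sorted(missing)}")
    return data


def build_draft_prompt(outline: dict, tone: str) -> str:
    """Pass only the title and section list forward, plus a fixed tone."""
    sections = "\n".join(f"- {section}" for section in outline["sections"])
    title = outline["title"]
    return (
        f"Write an introduction for '{title}' in a {tone} tone.\n"
        f"Sections to preview:\n{sections}"
    )


# Sample output from a hypothetical outline step, including an extra field.
raw_output = json.dumps(
    {
        "title": "CoT vs Prompt Chaining",
        "sections": ["What is CoT?", "What is prompt chaining?"],
        "notes": "internal scratch text",
    }
)
draft_prompt = build_draft_prompt(parse_outline(raw_output), tone="neutral")
print(draft_prompt)
```

The extra `notes` field is dropped at the handoff, and the tone is set explicitly rather than inferred, which covers the overload and consistency tips.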

3. Add Guided Reasoning

While prompt chaining provides the structure, you can infuse it with the power of guided reasoning by incorporating Chain of Thought (CoT) principles within individual steps. When a particular subtask involves complex problems or requires analytical depth, use CoT reasoning to guide the model. This means asking the AI to "think step-by-step" for that specific part of the chain.

For instance, when developing the main arguments for a blog post, you could use a prompt that asks the model to outline its reasoning for each point. This encourages a more thorough and logical exploration of the topic, rather than just a superficial statement. This hybrid approach allows you to get the best of both worlds.

By strategically adding CoT reasoning at critical junctures in your prompt chain, you can significantly enhance the quality and depth of the content. This is especially useful for tasks that involve data analysis, strategic decision-making, or explaining intricate concepts where clear, explicit reasoning is essential.
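
A hybrid chain can be sketched by flagging which steps should carry a step-by-step instruction. In the snippet below, the `Step` class, the `use_cot` flag, the suffix wording, and `stub_model` are all assumptions made for illustration; the stub records prompts instead of calling a real model.

```python
from dataclasses import dataclass
from typing import Callable

COT_SUFFIX = "Think step-by-step and show your reasoning before the final answer."


@dataclass
class Step:
    template: str  # must contain "{input}"
    use_cot: bool = False


def render(step: Step, previous_output: str) -> str:
    """Fill in the template and add the CoT instruction only where flagged."""
    prompt = step.template.format(input=previous_output)
    return f"{prompt}\n\n{COT_SUFFIX}" if step.use_cot else prompt


def run_hybrid_chain(model: Callable[[str], str], steps: list[Step], initial_input: str) -> str:
    current = initial_input
    for step in steps:
        current = model(render(step, current))
    return current


seen: list[str] = []


def stub_model(prompt: str) -> str:
    """Stand-in for a real model call: records the prompt, returns a placeholder."""
    seen.append(prompt)
    return f"<output {len(seen)}>"


steps = [
    Step("Create an outline for a blog post about: {input}"),
    Step("Develop the main argument for each point in this outline:\n{input}", use_cot=True),
    Step("Polish the wording of this draft:\n{input}"),
]
result = run_hybrid_chain(stub_model, steps, "prompt chaining")
print([prompt.endswith(COT_SUFFIX) for prompt in seen])
```

Only the argument-development step receives the reasoning instruction, so the extra tokens are spent where analytical depth matters.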

4. Test And Iterate Fast

Effective implementation requires rapid and continuous iterative refinement. Don't expect your prompt chain to be perfect on the first try. The key is to test your workflow, analyze the output quality, and make adjustments quickly. Since the task is broken into manageable subtasks, you can isolate and tweak individual prompts without overhauling the entire system.

Start by running the full chain and evaluating the final output. If it's not meeting your expectations, work backward to identify the weak link. Is an early prompt providing poor input for the next step? Is a particular instruction too vague? Experiment with different phrasings, add more context, or adjust the scope of what you're asking for in each prompt.

Here are strategies for successful iteration:

- Test Prompt Variations: Experiment with different wording and examples to optimize each prompt's performance.

- Adjust the Chain Structure: If outputs are inconsistent, consider reordering steps or adding new ones for clarity.

- Refine Output Scope: Modify what you ask for in each prompt to get the right level of detail.
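
Testing prompt variations can be as simple as running each variant through the same step and scoring the result. The sketch below uses canned responses and a toy scoring rule (counting bullet lines) so it runs without a model. Both are placeholders for whatever model call and quality metric you actually use, and `pick_best_variant` is a hypothetical helper.

```python
from typing import Callable


def pick_best_variant(
    variants: list[str],
    model: Callable[[str], str],
    score: Callable[[str], float],
) -> tuple[str, float]:
    """Run every prompt variant once and return the highest-scoring one."""
    scored = [(variant, score(model(variant))) for variant in variants]
    return max(scored, key=lambda pair: pair[1])


# Canned outputs standing in for real model responses.
CANNED = {
    "Summarize the article.": "It is about prompts.",
    "Summarize the article in three bullet points.": "- CoT\n- Chaining\n- Hybrid",
}


def stub_model(prompt: str) -> str:
    return CANNED[prompt]


def bullet_count(output: str) -> float:
    """Toy metric: how many lines are formatted as bullets."""
    return float(sum(1 for line in output.splitlines() if line.startswith("- ")))


best_prompt, best_score = pick_best_variant(list(CANNED), stub_model, bullet_count)
print(best_prompt, best_score)
```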

5. Use Workflow Tools

To manage both prompt chaining and CoT prompting at scale, specialized workflow tools can help. Platforms designed for building AI applications can streamline the entire process, from structuring prompt chains to integrating different AI models. These tools provide the framework needed for sophisticated prompt engineering without requiring extensive manual effort.

For example, tools like the IBM watsonx Developer Hub or AirOps offer features that support both techniques. They allow you to break tasks into individual steps for prompt chaining and can maintain context for complex reasoning. Using such platforms helps manage the complexity of multi-step AI workflows, centralize knowledge, and connect with various data sources and AI models seamlessly.

These workflow tools are invaluable for scaling your content generation efforts. They provide the necessary infrastructure to build, test, and deploy robust AI-powered processes, ensuring that you can consistently produce high-quality content while optimizing for efficiency and cost.

Chain of Thought vs Prompt Chaining: What Are the Ideal Use Cases of Each?

Choosing between Chain of Thought (CoT) and prompt chaining depends entirely on the task at hand. Their ideal use cases are distinct, and understanding them is key to maximizing the effectiveness of your AI applications. CoT is best suited for tasks that demand deep logical reasoning and a transparent thought process to arrive at a single, accurate answer.

In contrast, prompt chaining shines in multi-stage projects like content creation, where a task can be broken down into sequential steps. It excels at iterative processes that require refinement at each stage. Understanding when to use each method allows you to apply the right technique for the right job, leading to better outcomes.

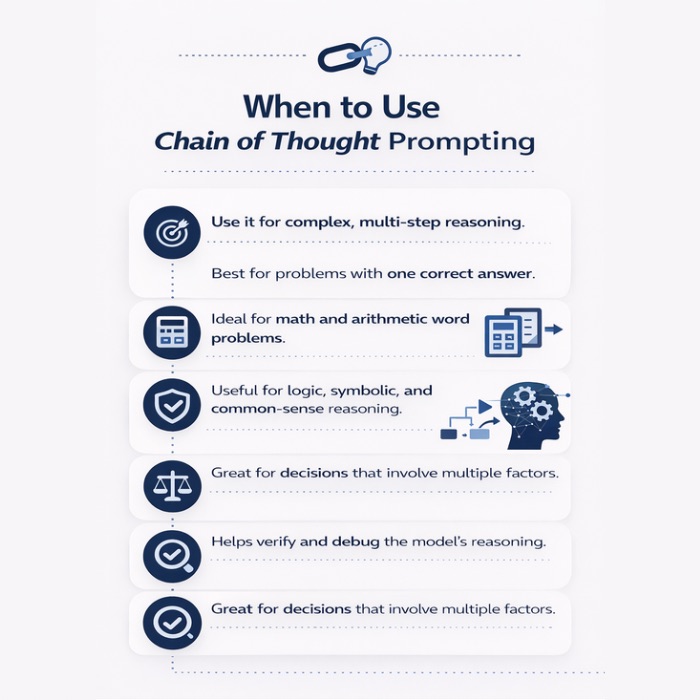

When to Use Chain of Thought Prompting?

You should use Chain of Thought (CoT) prompting for tasks that require complex reasoning and where understanding the model's thought process is crucial. It is particularly effective for solving complex problems that have a single correct answer and benefit from a clear, step-by-step logical path. For these types of tasks, CoT is generally better for complex reasoning than prompt chaining.

This technique is ideal for scenarios involving mathematical calculations, logic puzzles, and symbolic reasoning. By forcing the model to articulate its reasoning process, CoT helps ensure that the final answer is not just a guess but the result of a sound, verifiable procedure. It enhances transparency and allows you to debug the AI's logic if it makes a mistake.

Use Chain of Thought for:

- Arithmetic and Math Word Problems: Guiding the model through calculations step-by-step reduces errors.

- Symbolic and Common-Sense Reasoning: CoT helps the model navigate abstract or nuanced logical challenges.

- Complex Decision-Making: When a decision requires weighing multiple factors, CoT can surface the reasoning behind a recommendation.

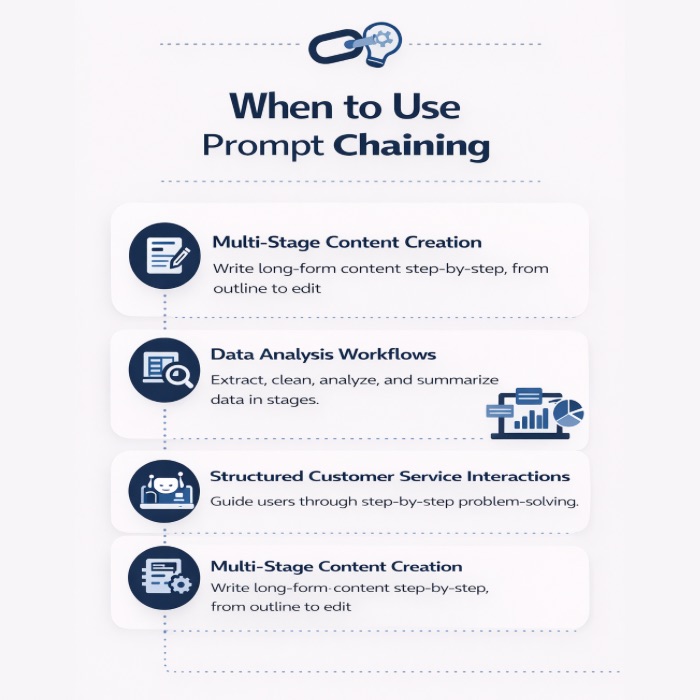

When to Use Prompt Chaining?

You should use prompt chaining when a task is too large or complex to be handled by a single prompt and can be naturally broken down into a sequence of manageable steps. This method is perfect for workflows that require iterative development, review, and refinement at each stage. Using a series of simpler prompts often yields better results than one convoluted one.

This approach excels in content creation, where you might have separate steps for outlining, drafting, content editing, and fact-checking. It is also highly effective in customer service applications, where a chatbot can guide a user through a problem-solving process one step at a time. The modular nature of prompt chaining makes it easier to control quality and troubleshoot issues.

Use prompt chaining for:

- Multi-Stage Content Creation: Writing reports, articles, or marketing copy where each section builds on the last.

- Data Analysis Workflows: Tasks like extracting data, cleaning it, analyzing it, and then summarizing the findings.

- Structured Customer Service Interactions: Guiding a customer through a series of questions to diagnose and solve a problem.

Conclusion

In summary, understanding the differences between chain of thought prompting and prompt chaining is crucial for optimizing your AI-generated content. Both techniques have their unique strengths and ideal applications, and leveraging them effectively can lead to better reasoning processes and improved results. By incorporating these strategies into your content workflow, you can enhance productivity and creativity while reducing errors. As you explore these methodologies, you’ll gain more clarity on when to use each approach, ultimately enabling you to create refined and impactful outputs. If you're eager to elevate your content strategy, don't hesitate to reach out for a consultation to learn how to effectively implement these techniques in your projects.

Frequently Asked Questions

What is the main difference between chain of thought and prompt chaining?

The main difference is that chain of thought prompting uses a single prompt to encourage a model's internal reasoning process for complex problem-solving. In contrast, prompt chaining uses a sequence of multiple prompts to break down a task into manageable steps, creating structured AI workflows for iterative development.

Is chain of thought prompting better for complex reasoning than prompt chaining?

Yes, chain of thought prompting is generally better for complex problems that require deep logical reasoning. It guides the artificial intelligence to articulate each reasoning step, leading to more accurate and transparent conclusions, which is ideal for tasks where the thought process itself is critical to the solution's validity.

How does prompt chaining improve AI-generated results?

Prompt chaining improves output quality by breaking a complex task into a series of prompts. This prompt engineering method allows the AI to focus on manageable steps one at a time. This modular approach leads to more focused, refined, and higher-quality results compared to a single, overly complex prompt.

When should I use prompt chaining instead of chain of thought prompting?

Use prompt chaining for multi-stage tasks like content generation or complex AI workflows that benefit from being broken down. Using simpler prompts in a sequence allows for easier debugging, reduces the error rate at each stage, and provides more control over the final output compared to a single-shot approach.

Are there any limitations to using chain of thought prompting with LLMs?

Yes, limitations of chain of thought prompting include increased computational cost and latency, as the AI model generates longer responses with intermediate steps. The effectiveness also heavily relies on the model's inherent reasoning capabilities and the quality of the prompt, as a flawed reasoning process can lead to incorrect conclusions.

Is prompt chaining a good way to mitigate hallucinations?

Prompt chaining can help reduce hallucinations by giving each prompt a narrower task and explicit context. It also leaves room for a dedicated verification step, such as checking a draft summary against the original text before producing the final version. This lowers the risk of irrelevant or misleading output but does not eliminate it.

Few shot prompting vs chain of thought: What’s the difference?

Few-shot prompting improves outputs by showing the model a few examples to imitate, while chain of thought focuses on step-by-step reasoning to solve complex tasks. In practice, prompt chaining often adds chain of thought monitorability by separating steps you can review, test, and refine.
