What Are the Different Types of Prompts in AI Applications?

Key Highlights

- Mastering different types of prompts is crucial for unlocking the full potential of AI models.

- Effective prompts are achieved through various prompt engineering techniques tailored to specific goals.

- Direct, few-shot, and chain-of-thought prompts are designed for different tasks, from quick answers to complex reasoning.

- A strategic approach to prompting involves understanding the task's complexity and the desired output.

- Avoiding common mistakes, such as vague instructions and skipping testing, is one of the most reliable ways to get better results.

- The right prompt engineering techniques help AI generate accurate, relevant, and high-quality content.

In the evolving landscape of artificial intelligence, the quality of your interaction with an AI model largely determines the quality of its output. This is where prompt engineering techniques become indispensable. Crafting the right prompt is both an art and a science: it guides the AI toward responses that are accurate, relevant, and aligned with your intent. Whether you're generating creative content or analyzing complex data, understanding different prompt types lets you tailor your instructions to the task and your target audience, making good results far more likely.

Understanding the Role of Prompts in AI Applications

Prompts are the foundational instructions that guide an AI, serving as the bridge between human intent and machine execution. They are the key elements in any interaction, providing the context and direction necessary for the AI to perform both simple and complex tasks. Effective prompt engineering techniques are vital for shaping the AI's response to match your desired output.

By mastering different prompting methods, you can significantly influence an AI's performance. The main AI prompt types, such as direct, few-shot, and chain-of-thought, differ in how they structure information and guide the model. Let's explore what a prompt is and how it shapes AI-generated content.

What Is a Prompt?

At its core, a prompt is an input or query you provide to an AI model to elicit a response. It acts as a directional stimulus, guiding a large language model (LLM) toward a correct response. Think of it as giving instructions to a highly capable assistant; the clarity and quality of your instructions directly impact the outcome.

Prompts can range from a simple question to a detailed set of commands. For instance, a direct prompt gives explicit instructions, like "Summarize this article in three bullet points." In contrast, an indirect prompt might be more open-ended, such as "Tell me about the universe," encouraging creative and interpretive answers. The structure of the prompt is crucial for defining the task and setting the context for the AI model.

Ultimately, the goal of a prompt is to leverage the AI's pretrained knowledge and generative capabilities to produce an output that meets your specific needs. Techniques like directional stimulus prompting can even nudge the AI toward a particular tone or perspective, further refining its response.

How Do Prompts Shape AI Outputs and Performance?

The way you structure a prompt fundamentally shapes the AI's output and overall performance. Effective prompts provide clear context and constraints, which guide the model toward better results. The more specific your instructions, the more likely the AI will generate the precise type of output you need for your desired task.

Instruction-based prompts in generative AI tools work by giving the model a clear command to follow. For example, a prompt like "Write a short story about a detective who solves a case in a futuristic city" provides the AI with a genre, character, setting, and plot point. This level of detail removes ambiguity and steers the model's creative process in a specific direction.

Without well-crafted prompts, an AI might produce generic, irrelevant, or even incorrect information. By defining the task, format, and tone, you control the model's focus, ensuring the generated content is not only accurate but also useful and aligned with your objectives.

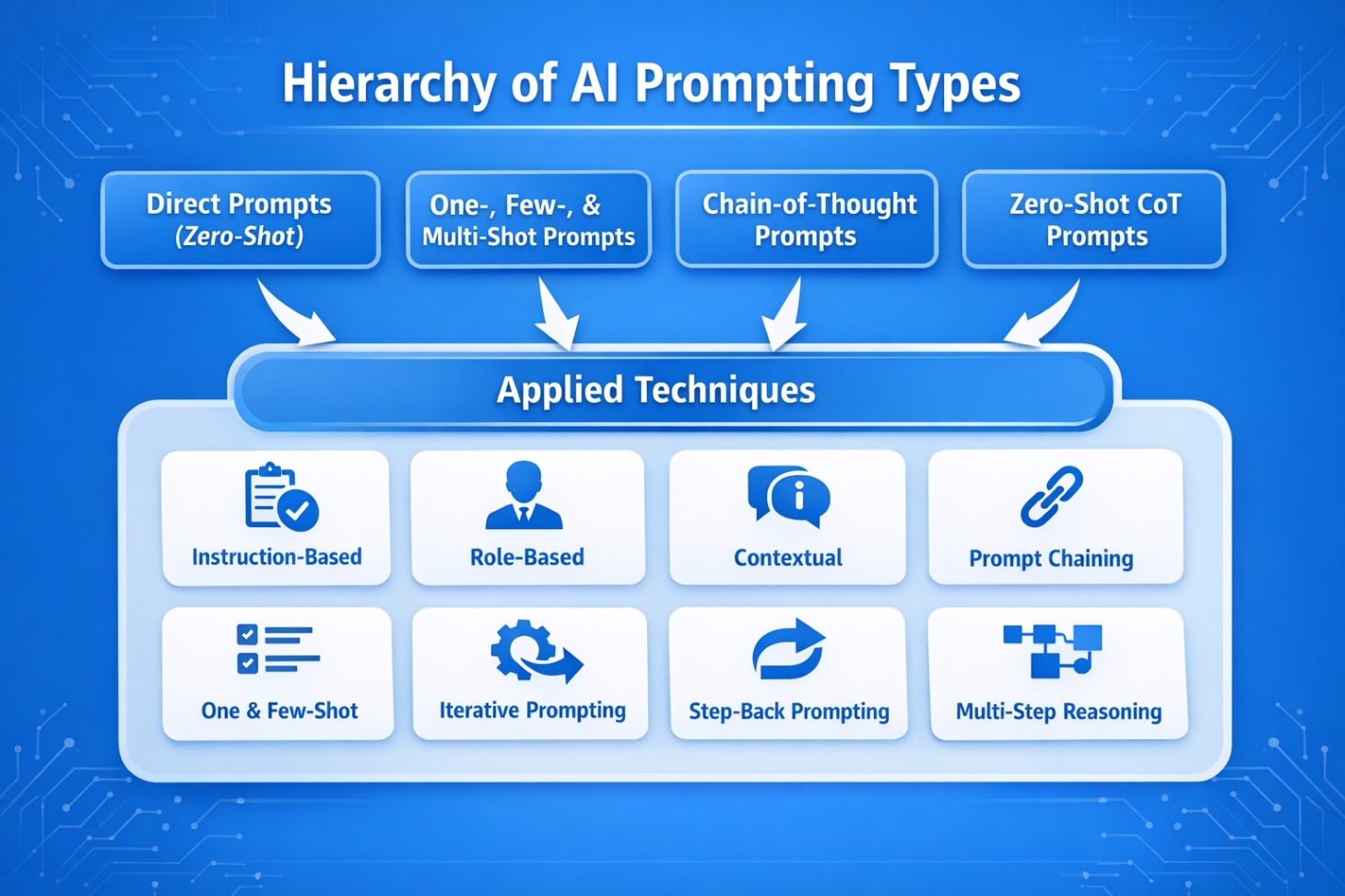

What Are The Main Types Of Prompts In AI?

Prompts are the instructions you give an AI model to shape what it outputs. In practice, most prompting falls into four core types that vary based on two things: whether you provide examples and whether you guide the model to reason more deliberately. Once you understand these categories, you can pick the right prompt structure for everything from quick content drafts to complex decision-making.

This framework also makes it easier to navigate the many prompting labels used in AI and prompt engineering without getting lost in buzzwords.

1. Direct Prompts (Zero-Shot)

Direct prompting is the simplest and fastest method. You give the model a clear instruction with no examples, and it responds based on its general training. This works best when the task is straightforward, you are exploring ideas, or you want a strong first draft to refine.

a. Clear Task Instruction

Be explicit about the task, output format, length, and constraints so the model has fewer chances to guess. Mention what the response should include, what it should avoid, and how it should be structured. This is the easiest way to reduce generic outputs.

b. Role And Tone Framing

Assigning a role helps the model choose the right depth and vocabulary, while tone guidance keeps it consistent with your audience. For example, “Act as a technical SEO lead” will produce a different response than “Explain it like I’m new to SEO.”

c. Context Injection

Context makes the output feel tailored instead of templated. Add details like your audience, your goal, your product, and any non-negotiables. Even a single sentence of background can dramatically improve relevance and reduce filler.
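The three ingredients above can be combined mechanically. The following is a minimal Python sketch, not a required format: the helper name `build_direct_prompt` and the labeled sections (`Context:`, `Task:`, `Requirements:`) are illustrative assumptions, and any clear layout works equally well.

```python
from typing import List, Optional


def build_direct_prompt(
    task: str,
    role: str = "",
    tone: str = "",
    context: str = "",
    constraints: Optional[List[str]] = None,
) -> str:
    """Assemble a zero-shot prompt from an optional role, context, tone, and rules."""
    parts = []
    if role:  # role framing sets depth and vocabulary
        parts.append(f"You are {role}.")
    if context:  # context injection: audience, goal, non-negotiables
        parts.append(f"Context: {context}")
    parts.append(f"Task: {task}")  # the explicit instruction is always present
    if tone:
        parts.append(f"Tone: {tone}")
    if constraints:  # format, length, and what to include or avoid
        parts.append("Requirements:")
        parts.extend(f"- {rule}" for rule in constraints)
    return "\n".join(parts)


prompt = build_direct_prompt(
    task="Explain what a canonical tag does.",
    role="a technical SEO lead",
    tone="Plain language for readers new to SEO.",
    context="The readers are small-business owners who manage their own websites.",
    constraints=["Keep it under 150 words.", "Use exactly three bullet points."],
)
print(prompt)
```

Leaving out `role`, `tone`, or `context` simply drops that line, so the same helper also covers a bare one-line instruction.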

2. One-, Few-, And Multi-Shot Prompts

Shot-based prompting means you teach the model by showing examples. Instead of only describing what you want, you demonstrate the pattern. This is one of the best approaches when you need consistency across repeated outputs like meta tags, FAQs, product copy, classifications, or structured summaries.

a. One-Shot Example Guidance

One example gives the model an anchor for style, structure, and level of detail. This is useful when you want to enforce a specific format quickly, like turning long text into a short infographic blurb or rewriting copy in a set brand voice.

b. Few-Shot Pattern Learning

A few examples help the model generalize the pattern and handle variation more reliably. This is ideal when the task has multiple acceptable inputs but one expected output style, like labeling search intent, categorizing topics, or generating titles that match your exact format rules.

c. Multi-Shot For Higher Consistency

More examples become valuable when you have edge cases, strict formatting rules, or you are building a repeatable workflow. Multi-shot prompts are common in automation and templated content production where you want predictable output every time, even across different products or page types.

3. Chain-Of-Thought Prompts

Chain-of-thought prompting encourages more structured reasoning. It is most helpful when the model must compare options, follow constraints, diagnose issues, or produce a plan instead of a simple answer. It can improve quality for complex tasks, although you do not always need the model to display every reasoning step to benefit from better thinking.

a. Step-By-Step Reasoning

This format asks the model to work through the problem in logical steps before delivering the conclusion. It helps when the task involves prioritization, tradeoffs, or multiple constraints, such as choosing the best strategy, comparing tools, or building a workflow.

b. Step-Back Prompting

Step-back prompting forces a quick “zoom out” before the solution. The model first explains the broader concept or decision principles, then applies them to your case. This reduces shallow advice and helps the output stay aligned with the real goal, not just the surface-level question.
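Step-back prompting is often run as two separate model calls: one to surface the general principles and one to apply them to the specific question. A minimal Python sketch, assuming `ask` is a hypothetical stand-in for whatever function sends a prompt to your model and returns its text; the prompt wording is illustrative.

```python
from typing import Callable, List


def step_back_answer(question: str, ask: Callable[[str], str]) -> str:
    """Two-call step-back prompting: derive principles first, then apply them."""
    # Stage 1: zoom out to the broader concept or decision principles.
    abstraction_prompt = (
        "Before solving anything, step back: what general principles or concepts "
        "matter for questions like this one?\n"
        f"Question: {question}"
    )
    principles = ask(abstraction_prompt)
    # Stage 2: apply those principles to the specific case.
    answer_prompt = (
        f"Relevant principles:\n{principles}\n\n"
        "Using these principles, answer the original question.\n"
        f"Question: {question}"
    )
    return ask(answer_prompt)


# Hypothetical stand-in for a real model: records prompts, returns placeholders.
sent: List[str] = []


def fake_ask(prompt: str) -> str:
    sent.append(prompt)
    return f"response #{len(sent)}"


result = step_back_answer("Should we merge our three short pricing posts into one?", fake_ask)
```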

c. Multi-Step Reasoning

Here, you break one request into stages like plan, draft, refine, and validate. It is especially useful for content and strategy work where the best outcome comes from iteration, such as creating an outline, expanding sections, tightening tone, and adding SEO elements.
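Breaking a request into stages like this is often called prompt chaining: each stage's output becomes part of the next stage's prompt. A minimal Python sketch, assuming a hypothetical `ask` function that sends one prompt to a model and returns its reply; the stage names and wording are illustrative, not a prescribed workflow.

```python
from typing import Callable, List, Tuple

# (stage name, prompt template). {topic} is the brief; {previous} is the prior stage's output.
STAGES: List[Tuple[str, str]] = [
    ("plan", "Create a short outline for: {topic}"),
    ("draft", "Expand this outline into a full draft:\n{previous}"),
    ("refine", "Tighten the tone and remove repetition in this draft:\n{previous}"),
    ("validate", "List any gaps between this text and the brief '{topic}':\n{previous}"),
]


def run_chain(topic: str, ask: Callable[[str], str]) -> List[Tuple[str, str]]:
    """Run the stages in order, feeding each output into the next prompt."""
    previous = ""
    history: List[Tuple[str, str]] = []
    for name, template in STAGES:
        prompt = template.format(topic=topic, previous=previous)
        previous = ask(prompt)
        history.append((name, previous))
    return history


# Hypothetical stand-in for a real model call: records prompts, returns numbered placeholders.
calls: List[str] = []


def fake_ask(prompt: str) -> str:
    calls.append(prompt)
    return f"output {len(calls)}"


history = run_chain("an intro guide to prompt types", fake_ask)
```

Keeping every intermediate output in `history` makes it easy to see which stage introduced a problem when you iterate.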

4. Zero-Shot Chain-Of-Thought Prompts

Zero-shot chain-of-thought is a hybrid approach. You do not provide examples, but you still nudge the model to reason more carefully than a typical direct prompt. This is useful when you want speed, but also want to reduce mistakes in tasks that are easy to rush.

a. “Think Step By Step” Cue

A short instruction like “think step by step” can lead to more careful answers, especially for analysis, ranking, or decision-making. It works well when the prompt could otherwise produce a surface-level response.

b. Self-Check And Verification

Add a quick instruction to review the output before finalizing. This helps catch repeated points, missing requirements, inconsistent formatting, and weak logic. It is particularly helpful for content briefs, comparison sections, and anything with strict constraints.
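A self-check can be as simple as a fixed block appended to any prompt. A minimal Python sketch; the helper `with_self_check` and the checklist wording are illustrative assumptions. A self-check instruction makes errors less likely but does not guarantee their absence, so hard requirements are still worth verifying yourself.

```python
from typing import List


def with_self_check(prompt: str, checks: List[str]) -> str:
    """Append a review step asking the model to verify its own draft before answering."""
    checklist = "\n".join(f"- {c}" for c in checks)
    return (
        f"{prompt}\n\n"
        "Before giving your final answer, review your draft against this checklist:\n"
        f"{checklist}\n"
        "Fix anything that fails a check, then output only the corrected final version."
    )


prompt = with_self_check(
    "Write a comparison section of two project-management tools for a content brief.",
    [
        "No point is repeated.",
        "Every requirement in the brief is covered.",
        "Formatting is consistent for both tools.",
    ],
)
```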

c. Structured Reasoning Format

Requiring a specific output format forces clarity. When you ask for a checklist, decision matrix, ranked list with justification, or pros and cons, you guide the model toward organized thinking and make the result easier for readers to scan and use.

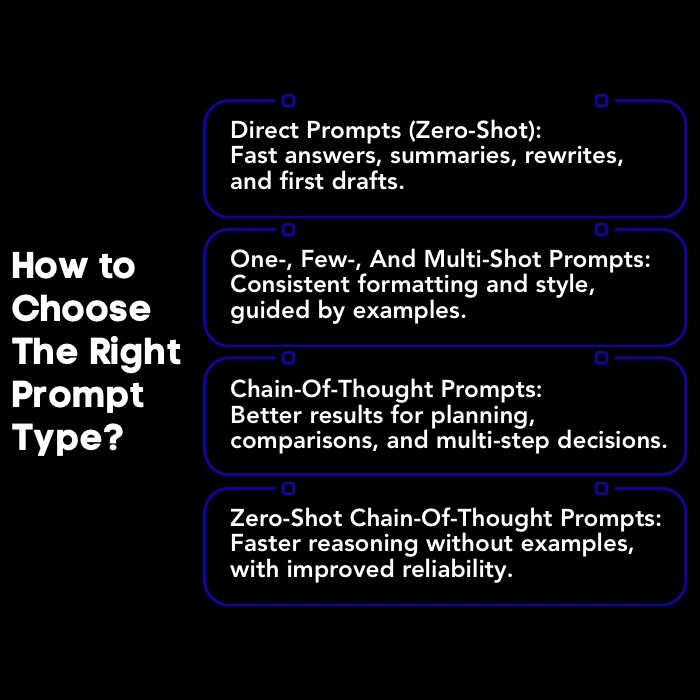

Which Type Of AI Prompting Should You Choose For Your Project?

Choosing the right type of prompt requires a strategic approach that aligns with your project's goals. Your decision should be based on the complexity of the specific task and the nature of the desired output. Different prompt engineering techniques are suited for different scenarios, from generating quick ideas to solving multi-step problems.

1. Direct Prompts For Quick Answers And First Drafts

Direct prompts, or zero-shot prompts, are used to get quick, straightforward answers from an AI. This method gives a clear command without examples, relying on the model's existing knowledge. They're ideal for tasks like summarization, translation, or drafting content. For example: "Translate 'Hello, how are you?' into Spanish."

Direct prompts are simple and efficient, making them a good fit when you need fast results with a clear objective. They form the foundation of many AI interactions and work well for well-defined tasks with minimal setup.

2. One-, Few-, And Multi-Shot Prompts For Consistent Formatting

When you need consistent formatting or want to teach an AI a specific style, few-shot prompts are especially effective. Unlike zero-shot prompts, which provide no examples, this method includes one or more input-output pairs to guide the model. The examples let the model learn the task in context, which usually produces more accurate, consistently formatted responses.

For instance, if you want an AI to classify customer feedback, you could provide a few examples:

- Text: "I think the vacation was okay." Sentiment: "Neutral"

- Text: "The product is amazing!" Sentiment: "Positive"

- Text: "I am very disappointed with the service." Sentiment: "Negative"

By recognizing patterns, the model learns to classify new text consistently. This approach is especially helpful for complex tasks where instructions alone may fall short. Clear, representative examples help the model generalize and deliver reliable results.
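Keeping every example in an identical layout, and leaving the last label blank for the model to fill in, is what lets it pick up the pattern. A minimal Python sketch that builds such a prompt from the three examples above; `build_few_shot_prompt` and the instruction line are illustrative assumptions.

```python
from typing import List, Tuple

# Labeled examples taken from the list above.
EXAMPLES: List[Tuple[str, str]] = [
    ("I think the vacation was okay.", "Neutral"),
    ("The product is amazing!", "Positive"),
    ("I am very disappointed with the service.", "Negative"),
]


def build_few_shot_prompt(examples: List[Tuple[str, str]], new_text: str) -> str:
    """Format input-output pairs identically, then leave the final label for the model."""
    lines = ["Classify the sentiment of each text as Positive, Negative, or Neutral.", ""]
    for text, label in examples:
        lines.append(f'Text: "{text}" Sentiment: "{label}"')
    lines.append(f'Text: "{new_text}" Sentiment:')  # the model completes this line
    return "\n".join(lines)


prompt = build_few_shot_prompt(EXAMPLES, "Delivery was late but support fixed it quickly.")
```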

3. Chain-Of-Thought Prompts For Complex Decisions And Strategy

For complex tasks that require reasoning, chain-of-thought (CoT) prompting is highly effective. Rather than asking for a direct answer, CoT prompts guide the AI to break a problem into logical steps, which tends to produce more thorough and accurate responses. A bare question may get a wrong answer on a multi-step problem, whereas instructing the model to "think step by step" encourages more careful problem-solving and often improves accuracy.

CoT prompts strengthen multi-step reasoning, which makes them valuable for strategic planning, problem-solving, and decision-making. The benefit is usually largest on tasks with several dependent steps and with larger, more capable models.
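The classic form of chain-of-thought prompting supplies worked examples: each demonstration shows its intermediate steps, not just the final answer, and the model imitates that style. A minimal Python sketch with one illustrative worked example (the problem and numbers are made up for demonstration); in practice you would usually include several demonstrations.

```python
# One worked demonstration whose answer spells out the intermediate steps.
# The numbers are illustrative, not taken from any benchmark.
COT_EXAMPLE = (
    "Q: A store sells 12 notebooks a day at $3 each. "
    "How much revenue does it make in 5 days?\n"
    "A: It sells 12 x 5 = 60 notebooks in 5 days. "
    "60 notebooks x $3 = $180. The answer is $180."
)


def build_cot_prompt(question: str) -> str:
    """Prepend the worked example so the model imitates step-by-step reasoning."""
    return f"{COT_EXAMPLE}\n\nQ: {question}\nA:"


prompt = build_cot_prompt(
    "A team writes 4 content briefs a day for 7 days, then discards 6. How many remain?"
)
```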

4. Zero-Shot Chain-Of-Thought Prompts For Faster Yet More Reliable Reasoning

Zero-shot chain-of-thought (CoT) prompting combines the simplicity of zero-shot prompts with step-by-step reasoning. It tells AI tools to "think step by step" without giving examples, making it faster than few-shot methods while still improving reliability. For instance, adding “Let's think step by step” to a complex question encourages the model to explain its reasoning before answering.

Unlike few-shot prompting, which needs specific examples, zero-shot CoT works well for logical tasks that don’t require detailed guidance. This approach balances speed and accuracy, making it practical for everyday use.
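Zero-shot CoT needs nothing more than the cue placed after the question. A minimal Python sketch; the optional second call, which feeds the model's reasoning back and asks only for the final answer, mirrors the answer-extraction step used in the research that popularized "Let's think step by step," but the helper names and exact wording here are illustrative.

```python
COT_CUE = "Let's think step by step."


def zero_shot_cot(question: str) -> str:
    """No examples: the question followed by the reasoning cue."""
    return f"Q: {question}\nA: {COT_CUE}"


def answer_extraction_prompt(question: str, reasoning: str) -> str:
    """Optional second call: include the model's reasoning, then ask for the final answer."""
    return f"{zero_shot_cot(question)} {reasoning}\nTherefore, the answer is"


question = "Is 91 a prime number?"
first = zero_shot_cot(question)
# `reasoning` would normally be the model's reply to `first`; this string is a placeholder.
second = answer_extraction_prompt(question, "91 = 7 x 13, so it has divisors other than 1 and itself.")
```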

What Are Some Common Mistakes To Avoid When Creating Different Types Of Prompts?

Even with the best prompt engineering techniques, common pitfalls can lead to subpar results. Crafting effective prompts is an iterative process, and knowing what to avoid is as important as knowing the best practices. Mistakes often stem from a lack of clarity, insufficient context, or a failure to test and refine.

1. Being Too Vague About The Outcome

A common prompting mistake is being too vague about the desired output. AI models perform best with specific instructions. Ambiguous prompts force the AI to guess your intent, often resulting in generic or irrelevant responses. For example, "Write about climate change" is too broad, while "Write a 500-word blog post explaining the effects of climate change on coastal communities for a general audience" provides clear direction. Precise prompts clarify format, length, topic, and audience, ensuring the output meets your needs and reduces time spent on revisions.

2. Skipping Constraints And Format Rules

Another frequent error is neglecting to include constraints and format rules in your prompt. Without these guardrails, the AI model has too much freedom, and the output may not fit your needs. You might get a response that's too long, too short, or in a completely different format than you intended.

When crafting a prompt, be explicit about your requirements. Specify the desired length, such as "in 200 words" or "in three paragraphs." Define the type of output you want, whether it's a bulleted list, a table, an essay, or a JSON object. These format rules are critical for structuring the AI's response.

For example, a prompt that says, "List the pros and cons of remote work in a two-column table," gives the AI model clear formatting instructions. By providing these constraints upfront, you ensure the generated content is well-organized and immediately usable for your purpose.
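When the output feeds another tool, a machine-checkable format such as JSON lets you verify the format rules in code rather than by eye. A minimal Python sketch, assuming the model was asked for a JSON object with `pros` and `cons` keys; the key names, the five-item cap, and the helper `parse_pros_cons` are illustrative choices, and the `reply` string stands in for a real model response.

```python
import json
from typing import Any, Dict, List

FORMAT_RULES = (
    'Return only a JSON object with exactly two keys, "pros" and "cons", '
    "each a list of at most 5 short strings. No prose outside the JSON."
)


def parse_pros_cons(raw: str) -> Dict[str, List[str]]:
    """Check a model reply against the format rules; raise ValueError if it breaks them."""
    data: Any = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict) or set(data) != {"pros", "cons"}:
        raise ValueError("expected exactly the keys 'pros' and 'cons'")
    for key in ("pros", "cons"):
        items = data[key]
        if not isinstance(items, list) or len(items) > 5:
            raise ValueError(f"'{key}' must be a list of at most 5 items")
        if not all(isinstance(item, str) for item in items):
            raise ValueError(f"every item in '{key}' must be a string")
    return data


prompt = f"List the pros and cons of remote work.\n{FORMAT_RULES}"
reply = '{"pros": ["No commute", "Flexible hours"], "cons": ["Isolation"]}'
result = parse_pros_cons(reply)
```

If parsing fails, a common follow-up is to send the error message back to the model and ask it to correct its reply.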

3. Using Too Few Or Poor Examples In Few-Shot Prompts

Example-based prompting, or few-shot prompting, is powerful when used correctly, but it can fail if your examples are weak or insufficient. This technique relies on giving the AI sample input-output pairs to teach it a task. If the examples are poorly chosen, unrepresentative, or too few, the AI will struggle to identify the pattern.

The key elements of successful few-shot prompts are quality and relevance. Your examples should clearly demonstrate the desired task and cover the range of cases you expect. For instance, if you're teaching sentiment analysis, include at least one example for every label you want the model to use, such as positive, negative, and neutral. More generally:

- Make sure examples are clear and unambiguous.

- Provide a diverse set of examples to avoid bias.

- Ensure the format is consistent across all examples.

Using high-quality, relevant examples leads to better prompts and more accurate results. This method is best used for complex or nuanced tasks where a direct instruction alone isn't enough to guide the model effectively.
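Some of these checks can be automated before you send the prompt. A minimal Python sketch, assuming examples are stored as (text, label) pairs; `audit_examples` is a hypothetical helper, and it only catches mechanical problems (missing labels, inconsistently spelled labels, empty inputs), not whether the examples are genuinely representative.

```python
from collections import Counter
from typing import List, Tuple


def audit_examples(examples: List[Tuple[str, str]], expected_labels: List[str]) -> List[str]:
    """Return readable problems with a few-shot example set (empty list = none found)."""
    problems = []
    counts = Counter(label for _, label in examples)
    missing = [label for label in expected_labels if counts[label] == 0]
    if missing:
        problems.append(f"no examples for: {', '.join(missing)}")
    unknown = sorted(set(counts) - set(expected_labels))
    if unknown:
        problems.append(f"unexpected labels (check spelling/format): {', '.join(unknown)}")
    empty = sum(1 for text, _ in examples if not text.strip())
    if empty:
        problems.append(f"{empty} example(s) with empty input text")
    return problems


examples = [
    ("The product is amazing!", "Positive"),
    ("I am very disappointed with the service.", "negative"),  # inconsistent casing
]
issues = audit_examples(examples, ["Positive", "Negative", "Neutral"])
```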

4. Overloading The Prompt With Unnecessary Context

Providing too much irrelevant information can confuse the AI and reduce response accuracy. Overloading prompts with unnecessary details is a common mistake that dilutes your main instruction. Effective prompt engineering means giving only enough context to clarify the task, no more. Before adding information, ask if it directly helps the AI understand your request. Irrelevant context is just noise.

For example, when requesting a report summary, you don't need the company's full history. Stick to the details that affect the task; a clear, focused prompt works best.

5. Not Testing And Iterating On Prompt Performance

A common mistake with AI is assuming your first prompt will work perfectly. Prompt engineering is iterative; testing and refining prompts is key to better results. The initial AI response is just a starting point.

Review it: Did it meet your needs? What was missing? Use this feedback to adjust your prompt, try new phrasings, add specifics, or switch prompt types. This cycle of testing and improvement helps you master prompt engineering and optimize results for any project.
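Iteration goes faster when you rerun candidate prompts against a fixed set of test inputs and compare scores. A minimal Python sketch; `toy_model` is a hypothetical, deterministic stand-in for a real model call, and exact-match scoring only suits tasks with a single short correct answer, such as classification. Open-ended writing needs human review or a rubric instead.

```python
from typing import Callable, Dict, List, Tuple


def score_variants(
    variants: Dict[str, str],
    cases: List[Tuple[str, str]],
    ask: Callable[[str], str],
) -> Dict[str, float]:
    """Run every prompt template on every test case; return the share of exact matches."""
    scores = {}
    for name, template in variants.items():
        hits = sum(
            ask(template.format(text=text)).strip() == expected
            for text, expected in cases
        )
        scores[name] = hits / len(cases)
    return scores


def toy_model(prompt: str) -> str:
    # Hypothetical stand-in: it only answers tersely when told to.
    label = "Positive" if "amazing" in prompt else "Negative"
    return label if "one word" in prompt else f"I would say this is {label.lower()}."


variants = {
    "v1": "What is the sentiment of: {text}",
    "v2": "Classify the sentiment as Positive or Negative. Answer with one word.\nText: {text}",
}
cases = [
    ("The product is amazing!", "Positive"),
    ("I am very disappointed with the service.", "Negative"),
]
scores = score_variants(variants, cases, toy_model)
```

Here the vaguer `v1` fails the format check on every case while `v2` passes, which is the kind of signal that tells you which phrasing to keep.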

How Can You Turn Prompt Strategy Into Real AI Visibility With Scalenut?

Understanding different prompting techniques can help you generate better AI outputs. But the bigger question for brands is this: does your brand actually appear inside AI answers?

Scalenut helps bridge that gap. It combines Generative Engine Optimization (GEO) with practical SEO workflows so teams can track how their brand appears in AI responses across platforms like ChatGPT and Perplexity, and then take action to improve visibility.

With Scalenut, you can move beyond prompt experimentation and start measuring how AI systems reference your brand, content, and competitors.

Key capabilities include:

- AI Visibility Tracking: Monitor metrics like Visibility Score, Average Position, and Share Of Voice to understand how often your brand appears in AI-generated answers.

- Prompt-Level Insights: See which prompts trigger your brand mentions and which sources AI models cite to generate responses.

- Competitive Visibility Analysis: Identify competitors frequently mentioned alongside your brand and uncover gaps in coverage.

- AI Traffic Monitoring: Track AI bot visits, top AI sources, and pages most referenced by AI systems.

- GEO Action Recommendations: Discover AI-driven content opportunities, authority signals, and engagement channels that can improve your visibility.

Scalenut also supports execution with built-in SEO tools like Cruise Mode, Content Optimizer, and Keyword Planner, helping you create and optimize content that aligns with both search engines and AI answer platforms.

Book a demo to see how Scalenut can help you grow your brand’s visibility in AI search and answer engines.

Conclusion

Understanding the different types of prompting techniques is crucial for optimizing performance and achieving desired outcomes. By effectively deploying direct, one-shot, few-shot, multi-shot, and chain-of-thought prompts, users can significantly enhance the quality and relevance of AI-generated responses.

Moreover, being mindful of common mistakes, such as vagueness or lack of examples, will further improve your results. As AI continues to evolve, mastering prompt engineering will not only elevate your projects but also position you as a leader in this transformative field. If you're ready to dive deeper and refine your skills, get in touch for a free consultation to explore how you can make the most of AI prompts in your projects.

Frequently Asked Questions

What distinguishes direct from indirect prompts in AI?

Direct prompts give an AI model clear, explicit instructions for a specific task, such as "Summarize this text." Indirect prompts are more open-ended, encouraging creative or interpretive responses, like "Tell me about the future." Effective prompts often depend on whether you need a precise answer or want the AI model to explore a topic freely.

What are the top 3 types of prompting in AI?

The top three prompt engineering techniques in generative AI are direct prompts (zero-shot) for quick tasks, few-shot prompts that provide examples for context, and chain-of-thought (CoT) prompting, which breaks down complex reasoning into logical steps. Each serves a different purpose, from simple commands to advanced problem-solving.

What are the types of prompts for generative AI?

In a generative AI tool, common types of AI prompting include instruction-based prompts that give direct commands, example-based prompts (few-shot) that provide context, and role-based prompts that assign the AI model a persona, like "You are a helpful tutor." Each type helps shape the AI's response for a specific purpose.

What are the different types of prompts in ChatGPT?

ChatGPT works with several prompt types that support content creation and improve response accuracy. These include zero-shot prompts for direct questions, few-shot prompts with examples for guidance, and chain-of-thought (CoT) prompts that encourage step-by-step reasoning on complex problems, leading to more detailed and logical answers.

How Do Role-Based Prompts Help In Guiding AI Responses?

Role-based prompts assign the AI a specific perspective or expertise, such as “SEO expert,” “data analyst,” or “customer support agent.” This helps the model choose the right tone, depth, and terminology, leading to more relevant and targeted responses.

Can You Give Examples Of Context-Setting Prompts For AI Models?

Context-setting prompts provide background details that help the AI tailor its response. For example: “Explain generative engine optimization to a beginner blogger,” or “Write a product description for a Shopify store selling eco-friendly skincare products.” These details help the model produce more relevant outputs.