What Is Citation Tracking in LLMs? (A Comprehensive Overview)

Key Highlights

- LLM visibility measures how often AI platforms feature your brand, describe it correctly, and link to your content in AI search.

- Unlike traditional SEO, generative engine optimization focuses on presence and accuracy in AI answers, not just keyword rankings.

- Effective citation tracking involves monitoring brand mentions across platforms like ChatGPT and Microsoft Copilot with a structured, repeatable approach.

- Key metrics for measuring AI visibility include citation share of voice, mention frequency, sentiment, and prompt coverage.

- Improving your citation rate requires creating answer-first content, strengthening entity signals, and building authority on sites LLMs trust.

- Consistent testing uncovers trends and helps you correct inaccuracies before they shape buyer perceptions.

Your brand can dominate the first page of Google and still be invisible in the AI-generated answers your buyers are now seeking. As more people turn to AI search for product research, the old rules of traditional search are being rewritten. This shift demands a new strategy: generative engine optimization. Understanding how Large Language Models (LLMs) cite brands is no longer optional; it's a critical component of modern marketing. This guide will provide a comprehensive overview of tracking your brand's presence in this new AI-driven landscape.

What Is Citation Tracking In LLMs?

Citation tracking in LLMs is the process of monitoring how, when, and where AI models like ChatGPT reference your brand, content, or products in their generated responses. It measures how often AI systems mention your brand, describe your offerings accurately, recommend you over competitors, and link back to your content as a source. This process is fundamental to understanding and improving your brand visibility in the age of AI search.

If you're asking, "How can I monitor how LLMs reference my brand or content?" the answer lies in systematic tracking. This involves more than occasional checks; it requires a structured approach to LLM optimization. By consistently analyzing these citations, you gain a clear picture of your AI visibility and can identify opportunities to enhance how your brand is perceived and presented by these powerful new information gatekeepers.

Which Platforms Should You Track Citations In?

Your brand might appear prominently in one AI model's response but be completely absent from another. This is because each platform, from ChatGPT and Google Gemini to Perplexity and Microsoft Copilot, pulls from different data sources and uses unique ranking logic. Therefore, effective citation tracking requires a multi-platform strategy to gain a complete picture of your AI visibility rather than a misleading, single-channel snapshot.

To get the most accurate data, you need to monitor the platforms your target audience uses most. For example, B2B tech buyers might prefer Perplexity for its sourced answers, while general consumers often start with ChatGPT. Tracking across the major AI answer engines ensures you see how different systems interpret and present information from various web pages, giving you a holistic view of your brand's standing. This broad approach is crucial for any serious effort to improve your presence in AI-driven discovery.

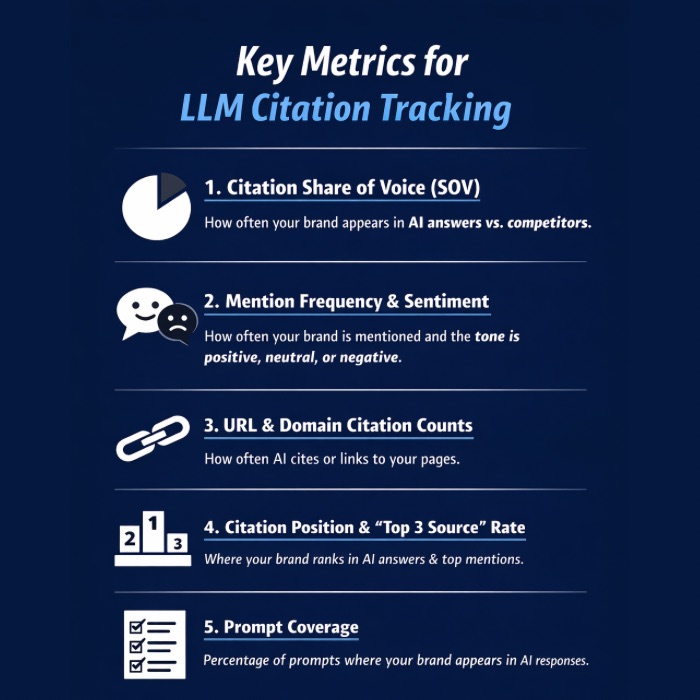

What Metrics Actually Matter For Citation Tracking In LLMs?

To effectively measure AI visibility, move beyond traditional SEO KPIs. LLM citation tracking needs new metrics tailored to AI-generated answers. These signals accurately reflect your brand’s influence across AI platforms and help build a comprehensive search analytics dashboard. By focusing on the right metrics, you turn subjective impressions into actionable insights.

Now, let’s look at the key metrics for a strong citation tracking strategy.

1. Citation Share Of Voice (SOV) By Topic

Brand visibility is only meaningful in context. Citation Share of Voice (SOV) shows how often your brand appears in relevant AI responses compared to your competitors. For example, if your brand appears in 40% of answers for a set of prompts, but a key competitor appears in 75%, you have a significant visibility gap to close. This metric is a cornerstone of your search analytics.

Unlike traditional rank tracking, which focuses on your position in a list of search results, LLM citation tracking measures your presence within the AI-generated answer itself. With LLMs, the primary goal is to be mentioned at all. SOV helps you understand who is dominating AI recommendations for specific topics, revealing competitive threats and opportunities directly on your dashboard.

Calculating SOV involves testing prompts related to your industry and tallying the mentions for your brand and your competitors. This provides a clear benchmark for your AI visibility and helps you set realistic goals for improving your standing in AI-driven conversations.
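As a minimal sketch of that tally, assume each tested response has already been reduced to the set of brands it names. The brand names, the input format, and the `share_of_voice` helper are hypothetical and for illustration only.

```python
from collections import Counter

def share_of_voice(responses, brands):
    """Return the fraction of responses in which each brand appears.

    `responses` is a list of sets, one per tested prompt, holding the
    brand names found in that AI answer (an assumed input format).
    """
    counts = Counter()
    for mentioned in responses:
        for brand in brands:
            if brand in mentioned:
                counts[brand] += 1
    total = len(responses)
    return {b: (counts[b] / total if total else 0.0) for b in brands}

# Hypothetical results from four prompts.
sample = [
    {"YourBrand", "CompetitorA"},
    {"CompetitorA"},
    {"CompetitorA", "CompetitorB"},
    {"YourBrand"},
]
sov = share_of_voice(sample, ["YourBrand", "CompetitorA", "CompetitorB"])
print(sov)  # YourBrand 0.5, CompetitorA 0.75, CompetitorB 0.25
```

Running the same function on each topic's prompt set separately gives the per-topic view this metric is meant to provide.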

2. Mention Frequency And Sentiment Signals

Not all brand mentions are created equal. Simply appearing in an AI-generated response isn't enough; the context of that mention is critical. Mention frequency tells you how often you appear, while sentiment signals tell you if the mention is positive, negative, or neutral. Tracking both is essential for effective AI optimization and for understanding your true AI visibility.

AI models generate brand citations by synthesizing information from their training data, which includes a vast array of web content, and on some platforms from live web results retrieved at answer time. They look for relevant, authoritative, and well-structured information to answer user prompts. You can automatically track these citations and their sentiment using specialized AI visibility tools. Scoring each mention helps you identify potential problems, such as a high mention frequency paired with negative sentiment scores, which could signal a messaging crisis.
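One way to spot the "frequent but negative" pattern is to keep a sentiment label for every response that mentions you and summarize them together. The sketch below assumes labels of 1 (positive), 0 (neutral), and -1 (negative), assigned by hand or by whatever tool you use. The scale and the `summarize_mentions` helper are assumptions, not a standard.

```python
from statistics import mean

def summarize_mentions(scored_mentions):
    """Summarize mention count and sentiment for one brand.

    `scored_mentions` holds one label per response that mentioned the
    brand: 1 (positive), 0 (neutral), -1 (negative). Assumed scale.
    """
    if not scored_mentions:
        return {"mentions": 0, "avg_sentiment": None, "negative_share": None}
    negatives = sum(1 for score in scored_mentions if score < 0)
    return {
        "mentions": len(scored_mentions),
        "avg_sentiment": mean(scored_mentions),
        "negative_share": negatives / len(scored_mentions),
    }

# Hypothetical labels from four responses that mentioned the brand.
summary = summarize_mentions([1, -1, -1, 0])
print(summary)
```

A high `mentions` count alongside a high `negative_share` is the combination worth investigating first.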

3. URL And Domain-Level Citation Counts

Measuring whether an AI response links back to your content is a crucial part of citation tracking. Some platforms provide direct links (citations), while others only reference a brand by name (mentions). Differentiating between the two provides deeper insights for your search analytics and AI optimization efforts. Tracking the specific URL and domain-level metrics shows which of your pages are seen as authoritative.

Recent research found that brands receiving both a mention and a citation were 40% more likely to reappear in subsequent answers, indicating more stable visibility. This highlights the importance of not just being named but also being sourced. Tools built for tracking AI visibility can automate this process, showing you which domains and pages are most frequently cited.
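If you are logging cited URLs by hand, a small script can roll them up by page and by domain. This is a rough sketch using only the Python standard library. The sample URLs are placeholders, and treating `www.` as equivalent to the bare domain is an assumption you may want to change.

```python
from collections import Counter
from urllib.parse import urlparse

def count_citations(cited_urls):
    """Count citations per exact URL and per domain."""
    by_url = Counter(cited_urls)
    by_domain = Counter()
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]  # assumption: www and bare domain are one site
        by_domain[host] += 1
    return by_url, by_domain

# Placeholder URLs collected from AI responses.
urls = [
    "https://www.example.com/pricing",
    "https://example.com/blog/guide",
    "https://example.com/pricing",
    "https://docs.example.org/start",
]
by_url, by_domain = count_citations(urls)
print(by_domain.most_common())
```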

4. Average Citation Position And “Top 3 Source” Rate

While presence is the first hurdle in LLM visibility, the position of your citation within an answer also matters. Being the first brand mentioned is more impactful than being the last. Average citation position helps you understand where you typically appear in AI-generated lists or comparisons, offering a more nuanced view than simple mention counts.

This metric differs from traditional rank tracking, where you measure a URL's position on a search engine results page. Here, you're measuring your brand's position within the conversational response itself. Your "Top 3 Source" rate (how often you appear among the first three brands mentioned) is a powerful indicator of your perceived authority. Many analytics dashboards can visualize this data.

Monitoring this aspect of citation tracking allows you to see if your optimization efforts are moving you up in the AI's recommendations. Just like with traditional SEO tools, the goal is to improve your rankings over time, but in this case, it's within the AI's narrative.
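Both numbers can be computed from an ordered list of brands per response. In this sketch, average position is taken over the responses that mention you, while the "Top 3" rate is taken over all tested responses. That split is an assumption, since the metric can reasonably be defined either way, and the `position_metrics` helper and sample data are hypothetical.

```python
def position_metrics(brand, ordered_mentions):
    """Average 1-based position and top-3 rate for `brand`.

    `ordered_mentions` is a list of lists: the brands named in each
    response, in the order they appear (assumed input format).
    """
    positions = [
        brands.index(brand) + 1
        for brands in ordered_mentions
        if brand in brands
    ]
    total = len(ordered_mentions)
    top3 = sum(1 for p in positions if p <= 3)
    return {
        "avg_position": sum(positions) / len(positions) if positions else None,
        "top3_rate": top3 / total if total else 0.0,
    }

# Hypothetical ordering from four responses.
answers = [
    ["CompetitorA", "YourBrand", "CompetitorB"],
    ["CompetitorA", "CompetitorB", "CompetitorC", "YourBrand"],
    ["CompetitorB", "CompetitorA"],
    ["YourBrand"],
]
metrics = position_metrics("YourBrand", answers)
print(metrics)
```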

5. Prompt Coverage

Prompt coverage measures the percentage of your target prompts that trigger a mention of your brand. If you test 20 relevant prompts and your brand appears in 12 of the responses, your prompt coverage or mention rate is 60%. This is the foundational metric for assessing your overall AI visibility and a key component of generative engine optimization.

AI models generate brand citations by pulling from a massive index of information to answer a user's prompt. Your content needs to be seen as a relevant and authoritative source for those specific questions. You can track this automatically through dedicated SEO tools that specialize in AI search analytics, which conduct prompt testing at scale.

A low prompt coverage score indicates that your content isn't aligned with the questions your target audience is asking. It's a clear signal that you need to refine your content strategy to better address real search intent, ensuring your brand is part of the conversation when it matters most.
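The 12-out-of-20 example works out as follows. The sketch also lists the prompts with no mention, since those are the ones to act on. The prompt names and the `prompt_coverage` helper are placeholders.

```python
def prompt_coverage(results):
    """`results` maps each tested prompt to True if the brand was mentioned."""
    if not results:
        return 0.0
    return sum(results.values()) / len(results)

# 20 placeholder prompts; the brand appears in the first 12.
results = {f"prompt {i + 1}": i < 12 for i in range(20)}

coverage = prompt_coverage(results)
uncovered = [prompt for prompt, hit in results.items() if not hit]

print(f"{coverage:.0%}")  # 60%
print(len(uncovered))     # 8
```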

How To Set Up Citation Tracking In LLMs?

Establishing a repeatable process is key for effective citation tracking. To consistently measure AI mentions of your brand, description accuracy, and competitor standing, you need structured workflows for prompt testing across major search engines and AI models. While SEO platforms can automate this, initial setup requires strategic planning.

Since AI cites sources it deems authoritative and relevant, your tracking should mirror real user queries. The steps below provide a practical framework for building a reliable citation tracking program.

1. Build A Prompt Library Based On Real Search Intent

The foundation of consistent measurement is a structured set of prompts that reflect how your prospects actually ask questions. To monitor how LLMs reference your brand, you must move beyond simple keywords and embrace natural language queries. Start by creating a library of 20–30 prompts covering various stages of the buyer's journey.

Your prompt library should include category discovery queries ("best analytics platform for ecommerce"), product comparisons ("Klaviyo vs HubSpot"), and problem-solution questions ("how to reduce churn for SaaS companies"). Sourcing these prompts from your search analytics, sales team feedback, and customer support logs ensures they align with real search intent. Traditional SEO tools can also help identify long-tail questions.

The more your prompt testing mirrors real-world use cases, the more accurate and actionable your tracking will be. This library becomes your single source of truth for measuring AI visibility and guiding your AI optimization efforts over time.

2. Create Topic Buckets (BoFu, MoFu, ToFu)

Organizing your prompt library into three funnel-based topic buckets provides deeper insights: Top-of-Funnel (ToFu), Middle-of-Funnel (MoFu), and Bottom-of-Funnel (BoFu). This segmentation helps you understand your visibility at each stage of the buyer's journey, transforming raw search analytics into a strategic map for generative engine optimization.

By bucketing prompts, you can see if your brand has strong awareness but poor consideration, or vice versa. For example, frequent mentions for ToFu prompts show good initial discovery, but a lack of mentions in MoFu or BoFu prompts could indicate a problem converting interest into purchase intent. This is where LLM citation tracking can directly inform and improve your SEO strategy.

Examples of topic buckets include:

- ToFu (Awareness): "What is project management software?"

- MoFu (Consideration): "Best project management tools for remote teams"

- BoFu (Decision): "Scalenut pricing vs Competitor A"
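If you keep the prompt library in code rather than a spreadsheet, tagging each prompt with its bucket makes per-stage coverage easy to compute. This is a small sketch using the example prompts above. The `Prompt` class and `coverage_by_bucket` helper are illustrative names, not part of any tool.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Prompt:
    text: str
    bucket: str  # "ToFu", "MoFu", or "BoFu"

LIBRARY = [
    Prompt("What is project management software?", "ToFu"),
    Prompt("Best project management tools for remote teams", "MoFu"),
    Prompt("Scalenut pricing vs Competitor A", "BoFu"),
]

def coverage_by_bucket(library, mentioned_prompts):
    """Share of prompts per bucket whose response mentioned the brand.

    `mentioned_prompts` is the set of prompt texts where the brand
    appeared in the latest test run (assumed input format).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for prompt in library:
        totals[prompt.bucket] += 1
        if prompt.text in mentioned_prompts:
            hits[prompt.bucket] += 1
    return {bucket: hits[bucket] / totals[bucket] for bucket in totals}

# Hypothetical run: the brand only showed up for the awareness prompt.
by_bucket = coverage_by_bucket(LIBRARY, {"What is project management software?"})
print(by_bucket)
```

A result like this one, strong at ToFu and empty further down, is the "awareness without consideration" pattern described earlier in this step.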

3. Standardize Test Conditions (Prompt Variants, Same Inputs)

To get reliable data, you must standardize your testing conditions. LLM answers can vary based on small differences in phrasing, so using the exact same prompts for each test is crucial. This consistency ensures that any changes in AI visibility are due to algorithm updates or content changes, not random variations in your inputs.

Your workflows should specify running the same set of prompts across all platforms (ChatGPT, Gemini, etc.) during each testing cycle. AI models generate brand citations based on the precise input they receive, so even minor prompt variants should be tracked as separate tests to understand their impact. This rigorous approach to prompt testing improves the quality of your search analytics.

While you can automate these tests, maintaining standardized inputs is a non-negotiable part of the process. This discipline ensures your prompt coverage data is credible and allows you to make confident, data-driven decisions about your AI optimization strategy.
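One way to enforce "same inputs, every cycle" is to build the test matrix once and give each platform and prompt pair a stable ID derived from its exact text. The sketch below is an illustration under assumptions. The platform list and both helper names are hypothetical, and sending the prompts to each platform is left out.

```python
import hashlib
from itertools import product

PLATFORMS = ["ChatGPT", "Gemini", "Perplexity", "Copilot"]

def make_test_id(platform, prompt):
    """Stable ID: identical input always maps to the same ID across cycles."""
    key = f"{platform}\n{prompt}".encode("utf-8")
    return hashlib.sha256(key).hexdigest()[:12]

def build_test_matrix(prompts, platforms=PLATFORMS):
    """One test case per (platform, prompt) pair."""
    return [
        {"id": make_test_id(platform, prompt), "platform": platform, "prompt": prompt}
        for platform, prompt in product(platforms, prompts)
    ]

# Two variants that differ only in capitalization become separate tests.
matrix = build_test_matrix(["best CRM for startups", "Best CRM for startups"])
print(len(matrix))  # 8
```

Because the ID changes whenever the wording changes, an edited prompt cannot silently replace the original in your history.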

4. Log Results With Evidence (Links, Screenshots, Dates)

To effectively monitor how LLMs reference your brand, your logging workflows must be meticulous. Documenting every result with concrete evidence is essential for tracking changes over time and identifying inaccuracies. For each prompt tested, log the full AI-generated output, the date of the test, and any source URLs provided.

A simple spreadsheet is a great starting point for this process. Create columns for the prompt, the AI platform used, the response, and a link to a screenshot of the output. This evidence is invaluable for spotting trends, sharing findings with stakeholders, and holding AI platforms accountable for misinformation. This detailed approach to search analytics is a cornerstone of successful AI optimization.

This systematic logging turns your observations into a structured dataset. Over time, this repository becomes a powerful tool for understanding how your content performs and where you need to focus your efforts to improve your citation rate and accuracy.

5. Choose A Tracking Cadence (Weekly Vs Monthly)

LLM responses change frequently due to algorithm updates and new content entering their indexes. A one-time audit is not enough; you need a consistent tracking cadence to spot trends and react quickly. Choosing a regular schedule for your prompt testing workflows, whether weekly or monthly, is key to maintaining a clear view of your AI visibility.

A weekly cadence is ideal for spotting visibility changes early and catching inaccurate answers before they spread widely. This frequency provides the most accurate data on LLM brand visibility, allowing you to measure whether your content updates are improving citations. A monthly cadence may be more practical for smaller teams but can miss short-term fluctuations. Your search analytics dashboard should reflect this chosen cadence.

Factors to consider when choosing a cadence include:

- Team Resources: Weekly tracking requires more time and effort.

- Market Volatility: Fast-moving industries benefit from more frequent checks.

- Reporting Needs: Stakeholders may require weekly or monthly updates.

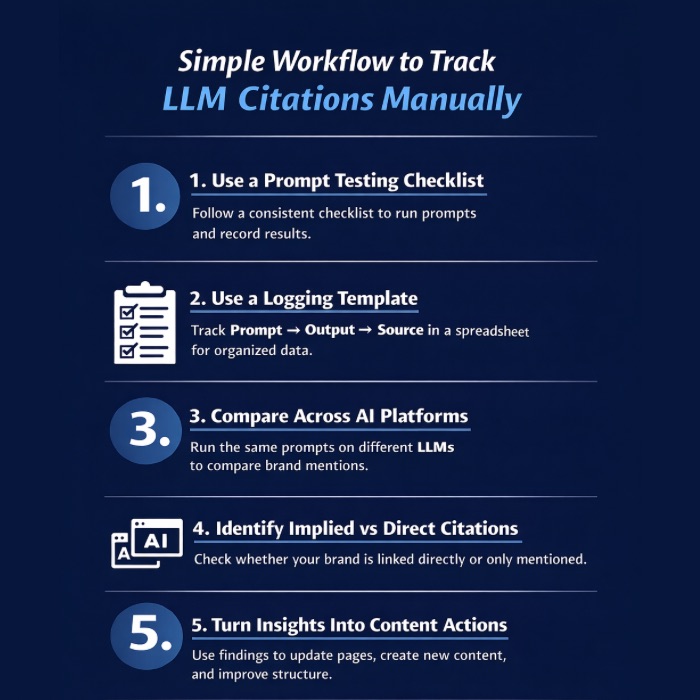

How To Track Citations Manually? (Simple Workflow)

While automated tools provide scale, manual citation checking is a great way to understand LLM responses. Engaging directly with outputs builds intuition about platform behavior. This hands-on approach remains valuable, even if you later use AI-powered tools. A simple manual prompt testing process offers strong search analytics without a large budget.

The steps below outline an easy workflow any team can use to start measuring and improving their presence in AI-generated answers.

1. A Repeatable Prompt Testing Checklist

A structured checklist is the backbone of any repeatable prompt testing workflow. This ensures consistency across every test and makes the process efficient. Your checklist should guide you from selecting a prompt to logging the final result, leaving no room for ambiguity. This systematic approach is fundamental to generating reliable search analytics for AI optimization.

AI models generate brand citations based on a complex mix of factors, so a consistent testing method is the only way to isolate variables and understand what drives your AI visibility. While you can't automatically perform the qualitative analysis, a checklist makes the manual part manageable.

Your checklist should include steps like:

- Select a prompt from your pre-defined library.

- Run the prompt on each target AI platform (e.g., ChatGPT, Gemini, Perplexity).

- Log the complete response, date, and a screenshot for evidence.

- Score the response for mention, accuracy, and sentiment.

2. A Copy-Paste Logging Template (Prompt → Output → Source)

To monitor how LLMs reference your content, a simple and consistent logging template is your best friend. A well-organized spreadsheet removes friction from the citation tracking process and ensures that every piece of crucial information is captured. This template serves as the foundation of your search analytics database, making it easy to review historical data.

The core of the template should be straightforward: one row for each prompt testing instance. The columns should capture the prompt you used, the full text of the AI's output, and the source URL if one was provided. This "Prompt → Output → Source" structure is the minimum viable setup for effective logging.

You can enhance this basic template with additional columns for the date, the AI platform, sentiment score, and notes on accuracy. This turns your simple spreadsheet into a powerful tool for analyzing trends and sharing findings from your prompt testing efforts.
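If you would rather generate the template than build it by hand, the sketch below writes the header and rows as CSV using Python's standard `csv` module. The column names are one possible layout based on the fields described above, and the sample row is a placeholder.

```python
import csv
import io

COLUMNS = [
    "date", "platform", "prompt", "output",
    "source_url", "screenshot", "sentiment", "accuracy_notes",
]

def write_log(handle, rows):
    """Write a header plus one CSV line per test; missing fields stay blank."""
    writer = csv.DictWriter(handle, fieldnames=COLUMNS, restval="")
    writer.writeheader()
    for row in rows:
        writer.writerow(row)

# Placeholder row; in practice, paste the full response into "output".
buffer = io.StringIO()
write_log(buffer, [{
    "date": "2025-01-06",
    "platform": "Perplexity",
    "prompt": "best analytics platform for ecommerce",
    "output": "(full response text)",
    "source_url": "https://example.com/guide",
}])
csv_text = buffer.getvalue()
print(csv_text)
```

To write to a real file instead of the in-memory buffer, open it with `open(path, "w", newline="")` and pass the handle to `write_log`.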

3. Compare Results Across LLMs Fairly

Comparing results across different AI search platforms requires a disciplined approach. Since each search engine and LLM has its own logic, you can't expect identical outcomes. The key to a fair comparison is to use the exact same prompts at the same time across all platforms. This minimizes variables and helps you isolate platform-specific behaviors.

Unlike traditional rank tracking where you compare keyword rankings on a single search engine, LLM citation tracking involves comparing narratives. You are analyzing which brand gets mentioned, in what context, and how that differs between, for example, ChatGPT and Perplexity. Your search analytics should focus on these qualitative and quantitative differences.

When comparing, look for patterns. Does one platform consistently favor a competitor? Does another platform provide more sourced links? These insights are crucial for tailoring your content strategy to the unique preferences of each AI environment, helping you move beyond a one-size-fits-all approach.
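To look for those patterns in logged results, you can group rows by platform and compare how often your brand is named and how often any source link is provided. This is a sketch under assumptions. The row keys (`platform`, `output`, `source_url`) and the `compare_platforms` helper are hypothetical, and the brand check is a naive substring match.

```python
from collections import defaultdict

def compare_platforms(rows, brand):
    """Per-platform mention rate and link rate from logged test rows.

    Each row is a dict with "platform", "output", and "source_url" keys;
    "source_url" is empty when the response gave no link.
    """
    totals = defaultdict(int)
    mentions = defaultdict(int)
    links = defaultdict(int)
    for row in rows:
        platform = row["platform"]
        totals[platform] += 1
        # Naive case-insensitive match; refine for ambiguous brand names.
        if brand.lower() in row["output"].lower():
            mentions[platform] += 1
        if row.get("source_url"):
            links[platform] += 1
    return {
        p: {"mention_rate": mentions[p] / totals[p], "link_rate": links[p] / totals[p]}
        for p in totals
    }

# Hypothetical rows from one testing cycle.
rows = [
    {"platform": "ChatGPT", "output": "YourBrand and CompetitorA both fit.", "source_url": ""},
    {"platform": "ChatGPT", "output": "CompetitorA is a common pick.", "source_url": ""},
    {"platform": "Perplexity", "output": "YourBrand is often recommended.", "source_url": "https://example.com/guide"},
    {"platform": "Perplexity", "output": "CompetitorA leads here.", "source_url": "https://example.org/review"},
]
report = compare_platforms(rows, "YourBrand")
print(report)
```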

4. Spot “Implied” Sources Vs True Citations

A critical skill in manual citation tracking is distinguishing between a true, verifiable citation and an "implied" source. A true citation is a direct link to a URL. An implied source is when an LLM mentions a brand or a fact without providing a direct link. Because such claims cannot be checked against a source, they are where "hallucinations" and other incorrect information are hardest to catch. Effective AI optimization depends on recognizing this difference.

AI models generate brand citations by synthesizing information, and sometimes they paraphrase content without attributing it correctly. Spotting these implied sources is important because they still shape user perception, even if they don't drive direct traffic. The best way to verify an implied source is to search for the specific phrase or data point to see if you can find the original content.

This detective work is essential for maintaining accuracy. When you find an implied source that leads to misinformation about your brand, it's a signal to create clearer, more authoritative content on your own site to correct the record in the AI search ecosystem.

5. Turning Findings Into Content Actions

The true value of citation tracking lies in turning your search analytics into concrete content optimization actions. LLM citation tracking can absolutely improve your SEO strategy by revealing content gaps and opportunities that traditional search rankings might miss. Your findings should directly inform your content creation and refresh workflows.

If your tracking reveals that competitors are frequently cited for a specific topic, analyze their content. What questions are they answering that you are not? Is their content better structured? Use these insights to create a superior resource that is more likely to be picked up by AI models. This proactive approach improves both your AI visibility and your authority on the topic.

Actionable steps to take from your findings include:

- Refresh outdated pages: Update high-intent pages that have lost citations.

- Create new content: Address user questions where your brand is not being mentioned.

- Improve content structure: Add clear Q&A sections and structured headings.

How To Improve Your Citation Rate In LLMs?

Tracking your citations is only the first step; the next is to actively improve your citation rate. This is where generative engine optimization moves from analysis to action. Improving your AI visibility involves a targeted content optimization strategy designed to make your content more appealing to AI models. Done well, LLM citation tracking strengthens your SEO strategy by showing which content to fix first.

1. Publish Answer-First Content For Citation-Friendly Queries

LLMs are answer engines. To improve your citation rate, you must create content that provides direct, clear answers to user questions. This practice, known as answer engine optimization, is a core tenet of improving your performance in AI search. Your search analytics can reveal which "citation-friendly" queries you should target.

This approach can significantly improve your SEO strategy by forcing you to create content that is highly valuable to users and easy for AI to parse. Instead of burying the answer in a long narrative, present it upfront. Use structured formats like lists, tables, and Q&A sections to make information easily extractable.

2. Add Unique Value LLMs Prefer

To stand out in a sea of similar content, you need to provide unique value that LLMs are programmed to seek out. While traditional search rewards comprehensive content, AI search also prioritizes originality, expert insights, and proprietary data. This is a key way LLM citation tracking can improve your overall SEO strategy: it pushes you to create truly differentiated content.

Your content optimization efforts should focus on adding elements that an AI cannot easily replicate by summarizing existing sources. This includes publishing original research, featuring quotes from verifiable experts, or providing detailed case studies with unique data points. This unique value makes your content a destination rather than just another node in the network.

When your search analytics show you're competing on a crowded topic, think about what unique angle you can bring. Can you survey your customers? Can you interview an industry leader? These efforts not only improve your AI visibility but also build your brand's authority.

3. Strengthen Entity Signals

LLMs rely on entity signals to understand who you are, what you do, and how authoritative you are. An entity is any well-defined thing or concept, like your brand, your products, or your CEO. Strengthening these signals is a crucial AI optimization tactic that directly impacts your AI visibility and can improve your SEO strategy as a whole.

Start by ensuring your brand information is consistent across the web, from your website to social media profiles and third-party directories. Use schema markup on your site to explicitly define these entities for search engines. The more consistently and clearly your entity is defined, the more trust an AI model will place in your content.

Your search analytics from various SEO tools can help you identify inconsistencies. Earning brand mentions on reputable industry sites also strengthens your entity signals, as it validates your importance and expertise in your field.
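For the schema markup step, a common starting point is a schema.org `Organization` object embedded as JSON-LD. The sketch below generates one from a few fields. The company details are placeholders, the `organization_jsonld` helper is a hypothetical name, and the selection of properties is a minimal assumption rather than a complete profile.

```python
import json

def organization_jsonld(name, url, same_as, description=None):
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # official profiles that refer to the same entity
    }
    if description:
        data["description"] = description
    return json.dumps(data, indent=2)

# Placeholder details; use the exact name and URLs you publish everywhere else.
snippet = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://x.com/exampleco",
    ],
    description="Example Co makes project management software.",
)
print(snippet)
```

The output goes inside a `<script type="application/ld+json">` tag on your site, and the `name` and `sameAs` values should match what appears on those profiles.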

4. Upgrade Content Structure

LLMs can only cite content they can easily parse and understand. A well-structured page is far more likely to be used as a source in AI search than a disorganized wall of text. Upgrading your content structure is a powerful and often overlooked form of content optimization that can significantly improve your AI visibility.

This goes beyond basic on-page SEO. Use a logical hierarchy of headings (H1, H2, H3) to organize your content. Employ lists, tables, and blockquotes to break up text and highlight key information. Your search analytics might not directly measure structure, but you can use SEO tools to crawl your site for clean HTML and fast load times.

Improving your content structure is a tangible way that citation tracking can enhance your SEO strategy. By making your content more accessible to machines, you also make it more readable and user-friendly for humans, a win for both AI and traditional search.
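A quick structural check you can run yourself is to confirm that a page's headings follow a logical order. The sketch below uses Python's built-in HTML parser to flag skipped heading levels. The rule that a page should have exactly one H1 is a widely used convention and an assumption here, not a requirement stated by any AI platform.

```python
from html.parser import HTMLParser

class HeadingAudit(HTMLParser):
    """Collects heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))

def heading_issues(html):
    """Return a list of human-readable heading structure problems."""
    parser = HeadingAudit()
    parser.feed(html)
    issues = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            issues.append(f"h{prev} followed by h{cur} (skipped level)")
    return issues

page = "<h1>Guide</h1><h2>Setup</h2><h4>Details</h4>"
print(heading_issues(page))
```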

5. Build Authority Where LLMs Commonly Pull Sources

LLMs have preferred sources they consider authoritative. Recent research on AI search shows that platforms like Wikipedia, Reddit, Forbes, and YouTube are frequently cited. A key part of your AI optimization strategy should be to build your brand's authority on the platforms that LLMs already trust. This is a core principle of generative engine optimization.

Your search analytics can help you identify which third-party sites are driving competitor citations. If a competitor is consistently cited from a specific industry publication, that's a signal that you need to build your presence there. This could involve guest posting, participating in expert roundups, or getting your products reviewed.

This approach helps improve your SEO strategy by diversifying your backlink profile and building off-page authority. By establishing your expertise on trusted domains, you create powerful external signals that tell LLMs your brand is a reliable source of information.

What Are the Common Citation Tracking Mistakes to Avoid?

As teams quickly adopt citation tracking, common mistakes can weaken their workflows. These errors can distort search analytics, create blind spots, and waste resources in your AI optimization efforts. Avoiding these pitfalls is as crucial as using the right strategies. Issues like testing too few prompts or ignoring competitors can lead to an incomplete view of your AI visibility. Recognizing these traps will help you establish a stronger, more reliable tracking process from the start.

1. Measuring Too Few Prompts

One of the most common mistakes in citation tracking is using an insufficient number of prompts. Relying on a handful of broad, generic prompts like "best CRM" will give you a skewed and incomplete view of your AI visibility. To effectively monitor how LLMs reference your brand, your prompt testing must cover a wide range of real user queries.

Real buyers use specific, long-tail questions when they use AI search. Your prompt library should reflect this diversity, covering different stages of the funnel and various use cases. A small sample size leads to unreliable search analytics and can cause you to miss significant gaps or opportunities.

Aim for a library of at least 20–30 carefully selected prompts, and ideally more, to get a dependable baseline. This comprehensive approach ensures your tracking efforts accurately represent your brand's performance across the wide spectrum of potential user interactions.

2. Confusing Mentions With Citations

Not all brand appearances are the same. A "mention" is when an AI simply names your brand. A "citation" is when it provides a direct, clickable link to your content. Confusing the two is a frequent error in citation tracking that can distort your search analytics. While both contribute to AI visibility, they serve different functions.

AI models generate brand citations to back up their claims with sources, driving traffic and lending authority. Mentions, on the other hand, build awareness but don't offer a direct path to your website. You can track both automatically, and it's important to segment your data to understand the difference. A high number of mentions with few citations might indicate a need to create more source-worthy content.

Furthermore, a mention can have positive or negative sentiment scores, while a citation is generally a positive signal. Differentiating them allows for a more nuanced understanding of how your brand is being portrayed.
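To keep the two apart in your data, you can label each response as a citation, a mention, or neither. The sketch below assumes links appear as plain URLs in the response text. Some platforms return sources separately, in which case you would check that list instead. The brand, the domain, and the `classify_appearance` helper are hypothetical.

```python
import re
from urllib.parse import urlparse

URL_PATTERN = re.compile(r"https?://[^\s)\]>\"']+")

def classify_appearance(response_text, brand, domain):
    """Return "citation", "mention", or "absent" for one response."""
    for url in URL_PATTERN.findall(response_text):
        host = (urlparse(url.rstrip(".,;")).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return "citation"  # a link to your own domain
    pattern = r"\b" + re.escape(brand) + r"\b"
    if re.search(pattern, response_text, re.IGNORECASE):
        return "mention"  # named, but no link to your domain
    return "absent"

# Hypothetical responses.
cited = classify_appearance(
    "Acme is a solid choice (https://www.acme.example/pricing).", "Acme", "acme.example"
)
named = classify_appearance("Many teams like Acme for reporting.", "Acme", "acme.example")
missing = classify_appearance("Try OtherTool instead.", "Acme", "acme.example")
print(cited, named, missing)  # citation mention absent
```

Segmenting your log by this label makes a "many mentions, few citations" gap visible at a glance.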

3. Ignoring Prompt Variants And Follow-Ups

Focusing only on a static list of initial prompts is a major blind spot in many tracking workflows. Real conversations with AI search are dynamic; users ask follow-up questions and rephrase their prompts. Ignoring these prompt variants means you're missing a large piece of the puzzle and limiting the effectiveness of your AI optimization.

A user might start with a broad query and then narrow it down, or ask for alternatives. AI models generate brand citations based on this conversational context. Your search analytics should account for these follow-up interactions to get a full picture of your prompt coverage.

4. Reporting Metrics Without Decisions

Collecting data is useless if it doesn't lead to action. A common failure is creating impressive dashboards full of metrics that are never used to make decisions. Your search analytics from prompt testing should be a tool for change, not just a report to be filed away. This is a key reason why some LLM citation tracking programs fail to improve SEO strategy.

Every metric on your dashboard should be tied to a potential action. If your sentiment score drops, what is your plan to address it? If a competitor's share of voice increases, what content will you create to fight back? This action-oriented mindset transforms tracking from a passive activity into a strategic driver of AI optimization.

Before you even begin tracking, define what you will do based on different outcomes. This ensures that your efforts are focused on improving performance, not just admiring data points.

5. Not Tracking Competitors For Context

Tracking your own brand's AI visibility in a vacuum is like watching a race with only one car on the track. Without tracking your competitors, your data lacks context and meaning. A 60% mention rate might seem good, but not if your main competitor has a 90% mention rate.

The most accurate tracking tools provide competitive analysis features, allowing you to build a dashboard that shows your performance relative to others. This competitive context is essential for understanding your true position in the market and identifying where you are falling behind. Your search analytics are incomplete without this crucial layer.

Make competitor tracking an integral part of your citation tracking program from day one. Identify your key competitors and monitor their visibility with the same rigor you apply to your own brand. This will reveal their strategies and highlight opportunities for you to gain an edge.

Scalenut: Turn Citation Insights Into Real GEO Wins

Tracking citations is useful, but the real value comes from turning those insights into meaningful content improvements. The best SEO platforms today don’t just show dashboards; they help you act on the data. If your goal is to increase brand mentions across platforms like ChatGPT, Google AI Overviews, and Perplexity, Scalenut helps bridge the gap between insights and execution.

Instead of stopping at monitoring citation patterns, Scalenut connects AI visibility signals with clear next steps. This allows teams to identify opportunities, update existing pages, and publish new content that is more likely to be referenced in AI-generated answers.

How Scalenut Supports Your AI Citation Strategy

- AI Visibility Insights Aligned With Real Queries: Understand how your brand appears in AI responses and identify prompts or topics where your visibility is limited.

- Content Planning Built for Authority: Turn citation gaps into a structured content roadmap with hub pages, supporting articles, and targeted updates.

- Faster Content Execution: Move from research to briefs to optimized drafts in one platform so improvements can be published quickly.

- Stronger Topical Authority: Build stronger internal connections across your content to help search engines and AI systems understand your expertise.

If you're looking to improve AI visibility and strengthen your citation footprint, Scalenut helps turn insights into a consistent optimization workflow. Book a demo today!

Conclusion

Citation tracking in LLMs is a crucial aspect of enhancing your content strategy and SEO efforts. By understanding how to set up effective tracking systems and avoiding common pitfalls, you can significantly improve your visibility and authority in the digital landscape. As you implement the strategies discussed, remember the importance of continually refining your approach based on the data you gather. This will not only help you stay ahead of the competition but also ensure that your content remains relevant and impactful. For expert assistance in optimizing your citation tracking processes, don't hesitate to reach out for a free consultation.

Frequently Asked Questions

Is manual citation checking still necessary if you use AI tools?

Yes, manual citation checking remains valuable even when using automated SEO tools. It helps you understand the qualitative nuances of AI search responses that automated workflows might miss. A hybrid approach combining the scale of AI tools with the insight of manual review is ideal for a comprehensive AI optimization strategy.

What are the best tools for tracking brand citations across LLMs?

The best tools for tracking brand citations are dedicated AI visibility platforms. While some traditional SEO tools are adding features, specialized platforms provide more robust search analytics for citation tracking. They offer automated prompt testing, competitive analysis, and sentiment scoring specifically designed for the AI-driven landscape.

Can you explain the difference between LLM citation tracking and rank tracking?

Traditional rank tracking measures your URL's position on a search results page for specific keywords. In contrast, LLM citation tracking measures your brand's presence and accuracy within the body of an AI-generated answer. It's a core component of generative engine optimization, focusing on narrative inclusion rather than a list of rankings.

Is there a way to analyze the sources of brand citations in LLM-generated outputs?

Yes. Many AI search platforms, like Perplexity, provide a direct URL for their sources. For others, you can analyze the output for "implied sources" by searching for specific phrases or data points to trace them back to the original content. This analysis is a key part of AI optimization.

Can LLM citation tracking help improve my SEO strategy?

Absolutely. LLM citation tracking improves your SEO strategy by revealing content gaps and audience questions your traditional keyword research might miss. The search analytics from tracking push you to create higher-quality, answer-focused content, which benefits your AI visibility and traditional search rankings alike, forming a holistic optimization loop.
